Case Study

LYlife MVP: From Idea to Test-Ready in ~30 Engineering Days

How we took LYlife from idea to a test-ready MVP in about 30 focused engineering days—using a reusable playbook for fast, reliable validation that works beyond healthcare.

Launching a Minimum Viable Product that people can actually test, trust, and learn from is harder than it sounds. LYlife, a lymphedema management app, forced us to answer a simple question: how do you go from zero to a test-ready MVP in about 30 focused engineering days—without creating throwaway code?

This case study is not just about healthcare. It’s a reusable playbook for any serious MVP: AI-native products, SaaS tools, and data-sensitive apps that need real validation, not just a demo.

1. The real problem: validation, not “just shipping”

Most MVPs don’t fail because the technology is wrong. They fail because the team makes learning slow and expensive.

Typical patterns:

- The app “works” in a demo, but you can’t safely invite real users.

- Onboarding is weak, so the data you collect has no real meaning.

- Shipping a new test build feels like its own project.

- Validation is based on opinions and anecdotes, not on clear signals.

For LYlife, we reframed the goal:

Reduce the time from hypothesis to testable release, then learn from actual user behavior.

To do that, we treated four things as non‑negotiable from day one:

- Data integrity: onboarding has to happen before tracking, so every data point has context.

- Trustworthy access control: sensitive data must follow least‑privilege rules by default.

- Mobile‑first performance: slow, glitchy experiences kill adherence.

- Tight feedback loop: frequent, safe releases with clear metrics attached.

These constraints apply to any serious MVP, whether it’s in AI, fintech, or B2B SaaS.

2. What we optimized for: the speed-to-validation checklist

To validate an MVP quickly, you have to remove specific bottlenecks:

- Hidden integration drift between frontend and backend.

- Security rules scattered across different parts of the codebase.

- A slow delivery loop where every deploy feels special and risky.

- Unstable UX on real mobile networks, where testers see jitter instead of value.

- Over‑engineered UI components built too early instead of using proven building blocks.

- No measurement plan, so “validation” becomes a feeling instead of an outcome.

LYlife’s architecture is a set of countermeasures to those problems. The exact tools can change per project, but the principles stay the same.

3. System overview (without heavy jargon)

LYlife is a modern web app that behaves like an app you’d install on your phone. It runs from a single codebase and delivers a smooth, app‑like experience across devices.

We structured it in three clear layers:

- Experience layer: the screens and flows that users interact with.

- Application layer: a thin backend where business rules live and can evolve.

- Data & identity layer: managed storage, authentication, and permissions close to the data.

Around this, we applied three key ideas:

- One shared language for data. We define important data shapes once and reuse them across the system, so the interface and backend don’t drift apart.

- Server data treated as special. We handle loading, caching, and refreshing carefully, so the app feels stable even on weak mobile networks.

- Proven UI building blocks. Instead of reinventing menus, dialogs, and layout, we build on top of reliable primitives and focus energy on the product itself.

This isn’t about chasing a trendy stack. It’s about shortening iteration cycles and reducing regressions while the MVP is still taking shape.

4. How we made LYlife test-ready fast (without making it fragile)

Shipping fast is not about cutting corners. It’s about choosing defaults that reduce rework, prevent avoidable bugs, and keep the product stable enough to put in front of real people.

We used six guiding rules that we now apply to other MVPs as well.

4.1 One shared “language” for the product

Instead of having the frontend and backend speak slightly different dialects, we defined key data structures once and reused them everywhere.

Why it matters:

- Fewer surprises when a screen calls an API.

- Fewer broken flows during iteration.

- Faster changes, because assumptions stay aligned.

Rule of thumb: keep this shared language stable. If you need to make a breaking change, do it intentionally.
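As an illustration of the “define once, reuse everywhere” idea, here is a minimal sketch assuming a TypeScript codebase where one shared module is imported by both the frontend screens and the backend handlers. The `SymptomEntry` shape, its fields, and `parseSymptomEntry` are hypothetical, not LYlife’s actual schema; many teams would use a schema library instead of a hand-written guard.

```typescript
// Hypothetical shared module, imported by both frontend screens and backend
// handlers. In a real project these declarations would be exported.

// Single source of truth for one tracking entry. Field names are illustrative.
interface SymptomEntry {
  userId: string;
  recordedAt: string; // ISO 8601 timestamp
  limb: "left_arm" | "right_arm" | "left_leg" | "right_leg";
  swellingScore: number; // hypothetical 0-10 self-reported scale
  note?: string;
}

const LIMBS: ReadonlyArray<SymptomEntry["limb"]> = ["left_arm", "right_arm", "left_leg", "right_leg"];

type ParseResult = { ok: true; value: SymptomEntry } | { ok: false; errors: string[] };

// Runtime check used at the API boundary and before the client submits, so
// both sides accept and reject exactly the same payloads.
function parseSymptomEntry(input: unknown): ParseResult {
  if (typeof input !== "object" || input === null) {
    return { ok: false, errors: ["payload must be an object"] };
  }
  const r = input as Record<string, unknown>;
  const errors: string[] = [];

  if (typeof r["userId"] !== "string" || r["userId"] === "") errors.push("userId is required");
  if (typeof r["recordedAt"] !== "string" || Number.isNaN(Date.parse(r["recordedAt"]))) {
    errors.push("recordedAt must be an ISO date string");
  }
  if (typeof r["limb"] !== "string" || !LIMBS.includes(r["limb"] as SymptomEntry["limb"])) {
    errors.push("limb is not a known value");
  }
  const score = r["swellingScore"];
  if (typeof score !== "number" || !Number.isInteger(score) || score < 0 || score > 10) {
    errors.push("swellingScore must be an integer from 0 to 10");
  }
  const note = r["note"];
  if (note !== undefined && typeof note !== "string") errors.push("note must be a string");

  if (errors.length > 0) return { ok: false, errors };

  const value: SymptomEntry = {
    userId: r["userId"] as string,
    recordedAt: r["recordedAt"] as string,
    limb: r["limb"] as SymptomEntry["limb"],
    swellingScore: score as number,
  };
  if (typeof note === "string") value.note = note;
  return { ok: true, value };
}
```

Because the type and the runtime check live side by side, a breaking change (say, renaming `limb`) fails compilation in every consumer at once instead of surfacing as a broken flow during testing.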

4.2 Security by default

LYlife works with health‑related data, so the safest behavior had to be the default. Access control lives close to the data, not just in the user interface or in a few backend checks.

Why it matters:

- You can add features without constantly worrying about new data leaks.

- Trust stays intact as the product grows.

- You avoid retrofitting security later, when it’s slower and more expensive.

Rule of thumb: keep permission rules readable. If they turn into a maze, mistakes and slowdowns are guaranteed.
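In production, “access control close to the data” usually means rules enforced by the database itself, for example row-level security policies in PostgreSQL. The sketch below only mimics that idea in TypeScript with a hypothetical in-memory `GuardedTable`, so the deny-by-default, least-privilege logic is visible and testable; the class, `Caller`, and `ownerOnly` are illustrative names, not part of LYlife.

```typescript
// Hypothetical sketch: one policy function guards every read and write, so a
// new screen or endpoint cannot forget the check.

interface Caller {
  userId: string | null; // null means unauthenticated
}

interface Row {
  id: string;
  ownerId: string;
  payload: string;
}

type Policy = (caller: Caller, row: Row) => boolean;

// Least privilege: an authenticated caller may touch only rows they own.
const ownerOnly: Policy = (caller, row) => caller.userId !== null && caller.userId === row.ownerId;

class GuardedTable {
  private readonly rows: Row[] = [];
  private readonly policy: Policy;

  constructor(policy: Policy) {
    this.policy = policy;
  }

  insert(caller: Caller, row: Row): void {
    // Writes use the same rule: callers can only create rows they own.
    if (!this.policy(caller, row)) throw new Error("permission denied");
    this.rows.push(row);
  }

  // Deny by default: rows are always filtered through the policy; there is
  // no unfiltered read path.
  select(caller: Caller): Row[] {
    return this.rows.filter((row) => this.policy(caller, row));
  }
}
```

Keeping the rule to a single short predicate is what keeps it readable; the same cross-user cases also make good targeted authorization tests later.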

4.3 Deployment that doesn’t become a project

We kept the backend intentionally lightweight and portable. Environment‑specific details are isolated at the edges; configuration is explicit.

Why it matters:

- Test builds ship quickly when something is ready.

- Fewer “blocked by infrastructure” moments.

- Less time spent on plumbing and more time learning from users.

Rule of thumb: if shipping a test build feels like an event, your validation loop is already too slow.
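One way to keep environment details “at the edges” is a single function that reads raw environment values, validates them, and hands a typed config object to the rest of the backend. This is a minimal sketch; `loadConfig`, `AppConfig`, and the variable names `DATABASE_URL`, `AUTH_ISSUER`, and `PORT` are hypothetical.

```typescript
// Hypothetical sketch: the only place that reads environment variables.
// Everything else receives a typed AppConfig, so moving to another host means
// changing env values, not code.

interface AppConfig {
  databaseUrl: string;
  authIssuer: string;
  port: number;
}

type Env = Record<string, string | undefined>;

function loadConfig(env: Env): AppConfig {
  const missing = ["DATABASE_URL", "AUTH_ISSUER"].filter((key) => !env[key]);
  if (missing.length > 0) {
    // Fail fast at startup instead of on the first real request.
    throw new Error(`missing required config: ${missing.join(", ")}`);
  }

  const port = Number(env["PORT"] ?? "8080");
  if (!Number.isInteger(port) || port <= 0 || port > 65535) {
    throw new Error(`invalid PORT: ${env["PORT"]}`);
  }

  return {
    databaseUrl: env["DATABASE_URL"] as string,
    authIssuer: env["AUTH_ISSUER"] as string,
    port,
  };
}
```

In a Node.js backend you would call `loadConfig(process.env)` once at startup; a missing or mistyped value then blocks the deploy immediately rather than breaking a test session.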

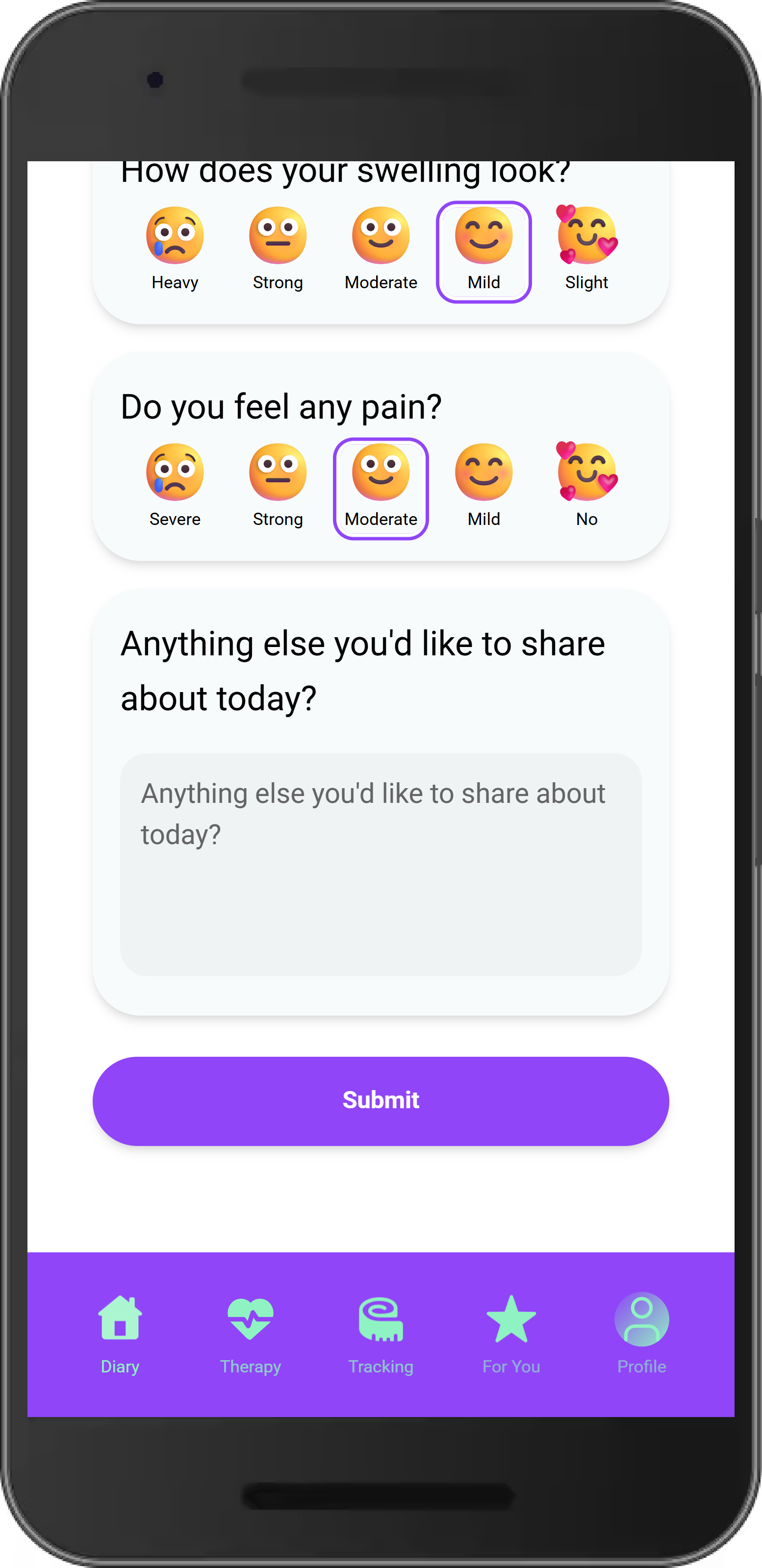

4.4 User experience that survives real networks

In the real world, networks are imperfect—especially on mobile. We treated loading, caching, and refresh rules as a core part of the product, not as an afterthought.

Why it matters:

- Fewer “it worked yesterday” bugs during testing.

- Less flakiness, which keeps testers focused on the product, not the glitches.

- A more predictable, habit‑friendly experience.

Rule of thumb: define what gets cached and when data refreshes, at least for the core flows.
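Writing the cache rules down can be as simple as a per-query policy table plus one decision function. The sketch below assumes a stale-while-revalidate strategy (similar in spirit to the `staleTime` option in TanStack Query); the query names and timings are hypothetical placeholders, not LYlife’s real values.

```typescript
// Hypothetical sketch: an explicit cache policy for the core flows.

type QueryKey = "profile" | "entriesToday" | "history";

interface CachePolicy {
  staleAfterMs: number; // younger data is shown without refetching
  discardAfterMs: number; // older data is not shown at all
}

const POLICIES: Record<QueryKey, CachePolicy> = {
  profile: { staleAfterMs: 10 * 60_000, discardAfterMs: 24 * 60 * 60_000 },
  entriesToday: { staleAfterMs: 30_000, discardAfterMs: 60 * 60_000 },
  history: { staleAfterMs: 5 * 60_000, discardAfterMs: 24 * 60 * 60_000 },
};

type Decision = "use-cache" | "use-cache-and-refresh" | "fetch-and-wait";

// Stale-while-revalidate: stale data is still shown immediately (no spinner
// on a weak network) while a background refresh runs.
function decide(key: QueryKey, fetchedAt: number | undefined, now: number): Decision {
  if (fetchedAt === undefined) return "fetch-and-wait";
  const age = now - fetchedAt;
  const policy = POLICIES[key];
  if (age < policy.staleAfterMs) return "use-cache";
  if (age < policy.discardAfterMs) return "use-cache-and-refresh";
  return "fetch-and-wait";
}
```

Frequently changing data (today’s entries) gets a short freshness window, while slow-moving data (the profile) can be reused longer; either way, the behavior is predictable instead of accidental.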

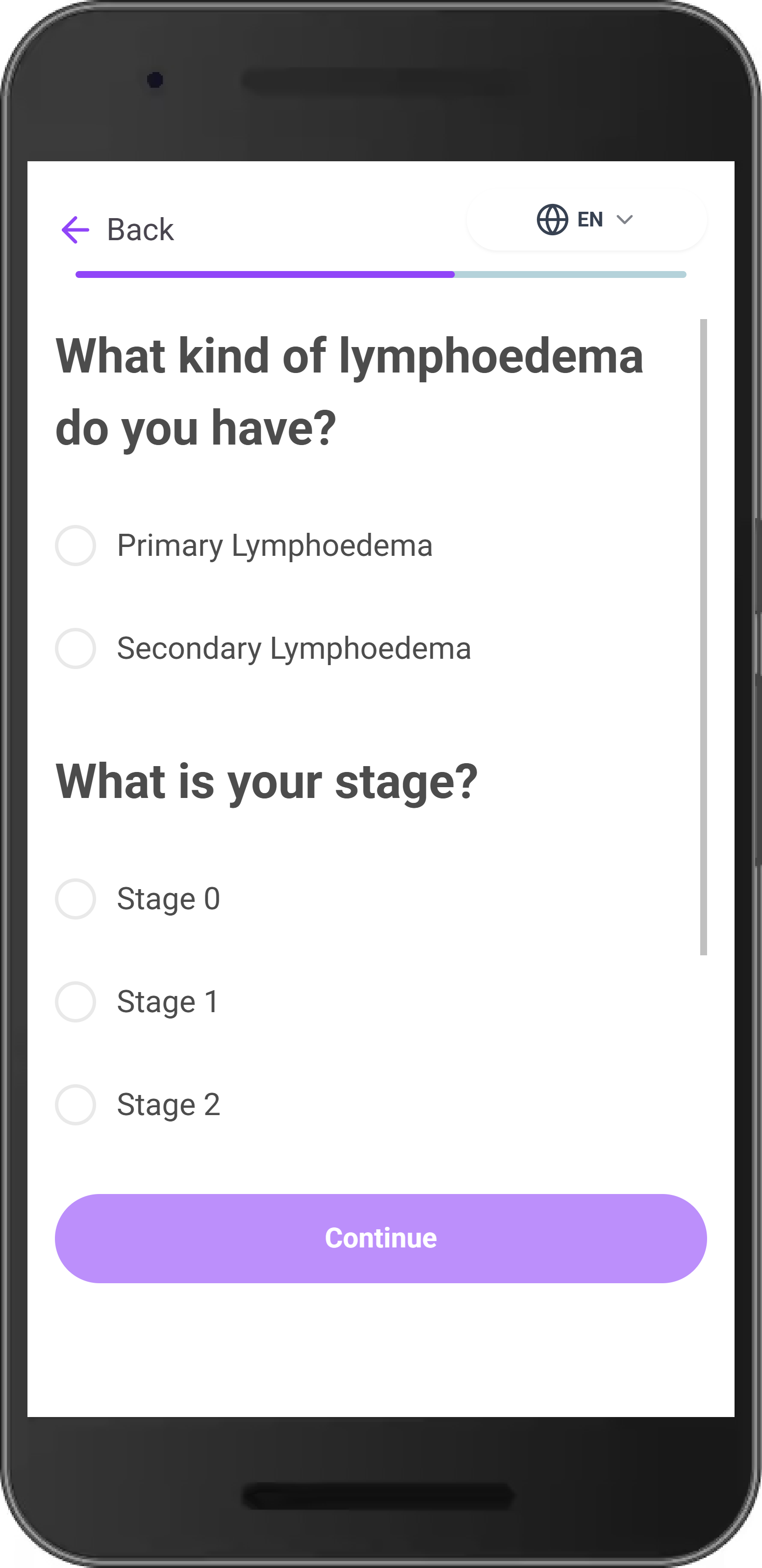

4.5 Workflow-first design

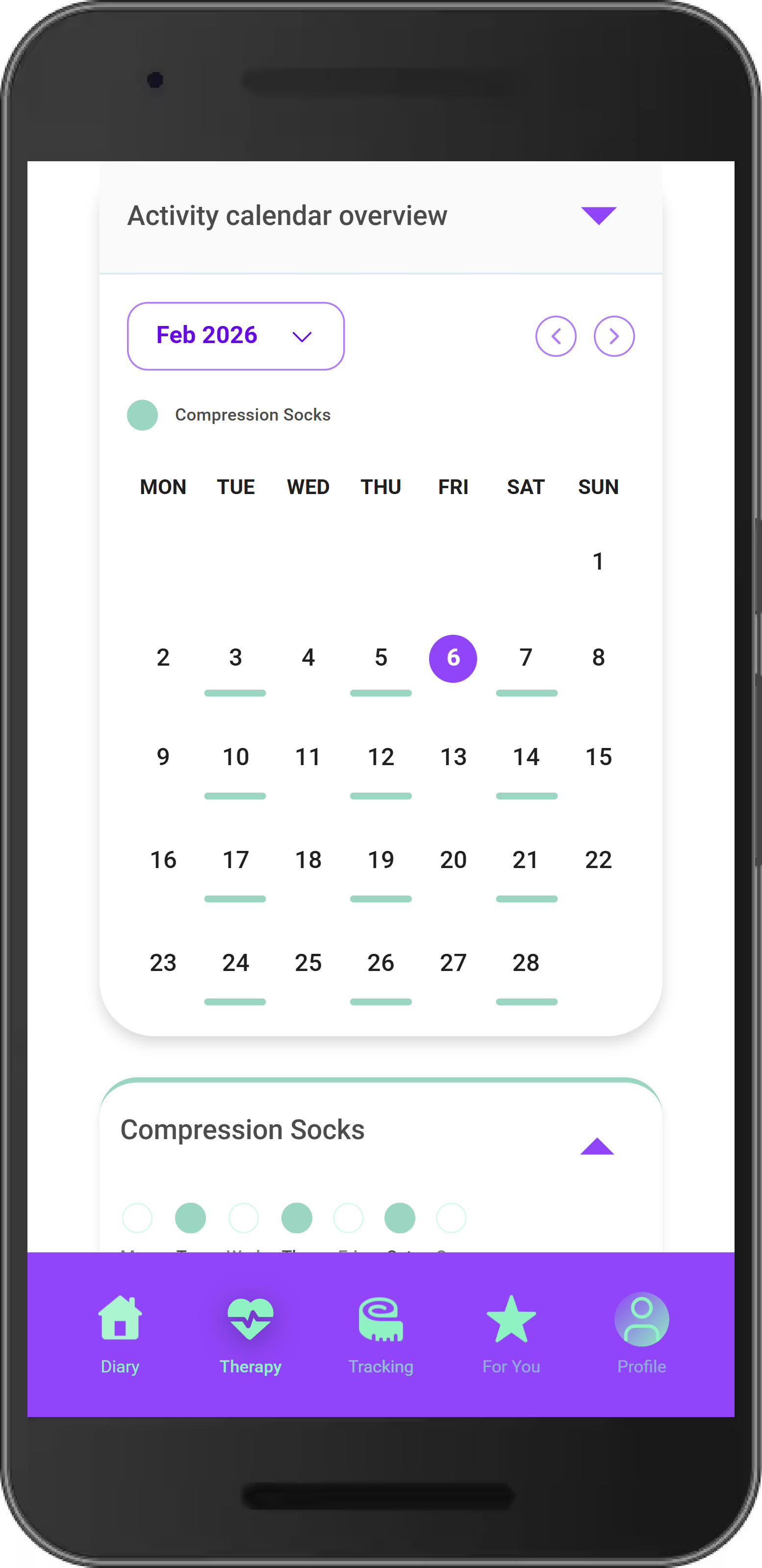

Routing is not just navigation. In LYlife, the whole app is a workflow: onboarding happens first, then tracking.

Why it matters:

- Early data is cleaner and more usable.

- Users understand what to do next, with less confusion.

- Feedback becomes about the product, not about getting lost in the interface.

Rule of thumb: treat the minimum onboarding fields like a contract and keep gate logic aligned with it.
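A minimal sketch of “onboarding fields as a contract”: the required fields are declared once, and the route gate derives its decision from that same list, so the two cannot drift apart. The profile fields, `missingOnboardingFields`, `resolveRoute`, and the route paths are all hypothetical.

```typescript
// Hypothetical sketch of an onboarding-first gate.

interface OnboardingProfile {
  diagnosisConfirmed?: boolean;
  affectedLimbs?: string[];
  consentAcceptedAt?: string; // ISO timestamp
}

// The contract: tracking is meaningful only once these fields are filled in.
const REQUIRED_ONBOARDING_FIELDS = ["diagnosisConfirmed", "affectedLimbs", "consentAcceptedAt"] as const;

type RequiredField = (typeof REQUIRED_ONBOARDING_FIELDS)[number];

function missingOnboardingFields(profile: OnboardingProfile): RequiredField[] {
  return REQUIRED_ONBOARDING_FIELDS.filter((field) => {
    const value = profile[field];
    // Unset, unconfirmed, or empty values all count as missing.
    if (value === undefined || value === false || value === "") return true;
    return Array.isArray(value) && value.length === 0;
  });
}

type Route = "/onboarding" | "/track" | "/history";

// Tracking screens are unreachable until onboarding is complete; the
// onboarding screen itself is always reachable.
function resolveRoute(requested: Route, profile: OnboardingProfile): Route {
  if (requested === "/onboarding") return requested;
  return missingOnboardingFields(profile).length > 0 ? "/onboarding" : requested;
}
```

The backend should apply the same `missingOnboardingFields` check before accepting a tracking entry, because a client-side redirect alone is easy to bypass; a small automated test of the gate is a cheap way to verify it in practice.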

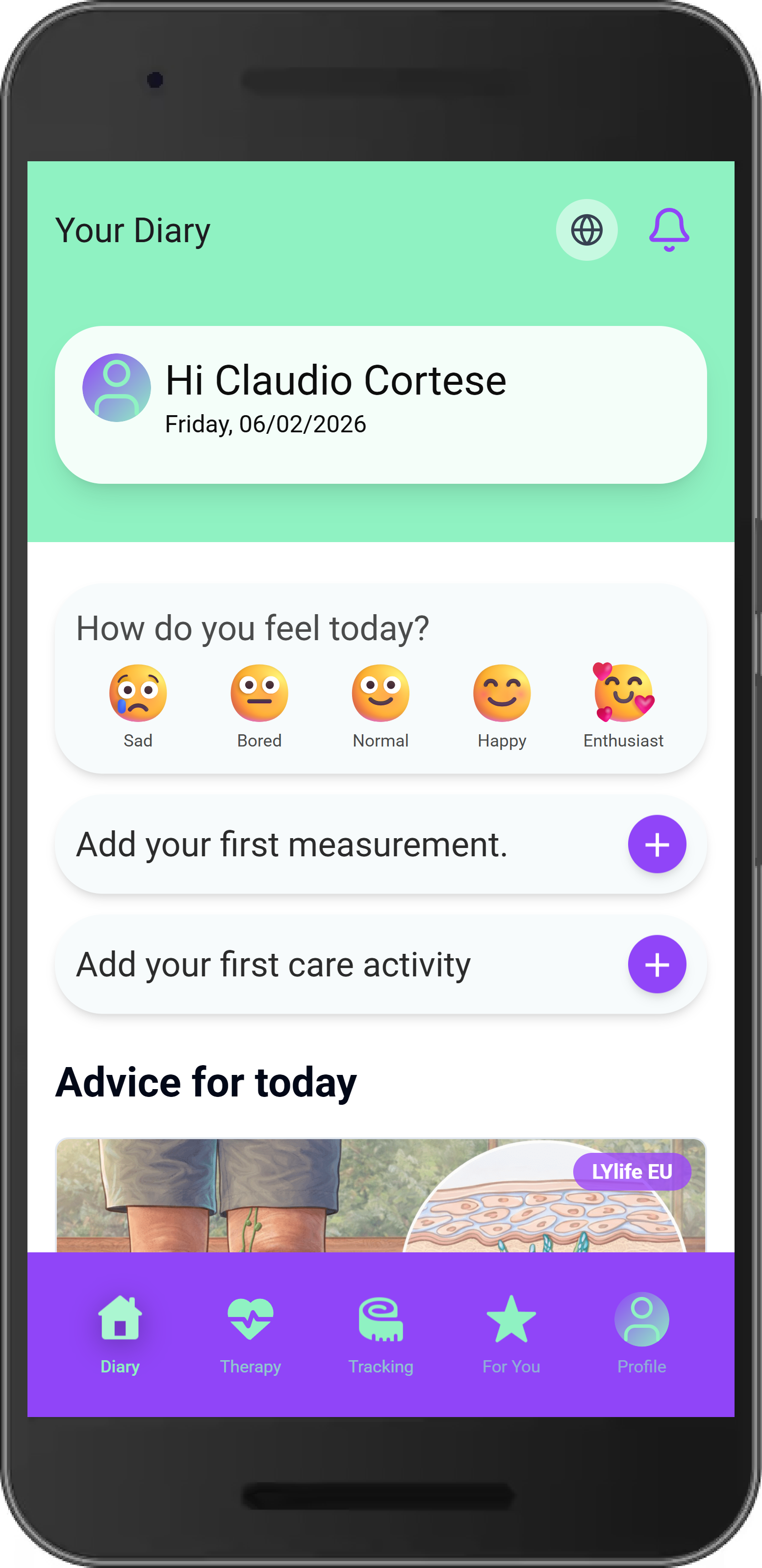

4.6 UI that looks “finished” early

We used a component approach that gives consistent behavior—menus, dialogs, focus handling, accessibility—while keeping styling flexible.

Why it matters:

- You don’t burn weeks reinventing basic interactions.

- You avoid a patchwork UI where every screen feels slightly different.

- Testers see a credible product experience, even in an early phase.

Rule of thumb: standardize key patterns early (especially modals and overlays) so the interface doesn’t fragment as features grow.

5. What “test-ready in ~30 days” looked like

We treated LYlife as a focused delivery sprint: around 30 engineering days of hands‑on build time from blank foundation to test‑ready product.

In plain terms, “test‑ready” meant:

- Onboarding flows were in place.

- The core tracking experience worked end‑to‑end.

- Data was stored and protected well enough to invite real users without anxiety.

We intentionally prioritized:

- Core workflows over secondary features.

- A stable data model that could evolve without constant breaks.

- Access rules that remained trustworthy as screens and endpoints grew.

- A coherent interface, so tests measured the product—not UI noise.

We intentionally postponed:

- Full offline‑first guarantees.

- Enterprise‑grade observability.

- Complex role hierarchies.

- “Perfect” visual polish everywhere.

The result: a credible, testable MVP in weeks—not quarters—with a clear path to hardening and scaling.

6. Measuring fast validation (instead of guessing)

Validation is a process, not a milestone. If you can’t put numbers around delivery speed and user behavior, you’re guessing.

We combined two small sets of metrics.

6.1 Delivery speed

We tracked:

- Change lead time: from code change to testable deployment.

- Deployment frequency: how often we shipped.

- Change failure rate: the share of deployments that caused problems.

- Time to restore service: how quickly we recovered from issues.

These metrics show whether learning is getting faster or slower. If every release becomes risky and painful, validation stops—even if you’re coding quickly.
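These four measures match the key delivery metrics popularized by the DORA (DevOps Research and Assessment) program. Below is a minimal sketch of computing them from a deployment log, assuming a hypothetical `Deployment` record; as a simplification, time to restore is measured from the failing deploy rather than from incident detection.

```typescript
// Hypothetical deployment log record; field names are illustrative.
interface Deployment {
  commitAt: number; // ms timestamp of the change being deployed
  deployedAt: number; // ms timestamp when it became testable
  failed: boolean; // did this deployment cause a problem?
  restoredAt?: number; // ms timestamp when service was restored, if it failed
}

interface DeliveryMetrics {
  medianLeadTimeHours: number;
  deploysPerWeek: number;
  changeFailureRate: number; // 0..1
  meanTimeToRestoreHours: number | null; // null if nothing failed
}

const HOUR = 3_600_000;

function median(values: number[]): number {
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

function deliveryMetrics(deploys: Deployment[], windowDays: number): DeliveryMetrics {
  if (deploys.length === 0) throw new Error("no deployments in window");

  const leadTimes = deploys.map((d) => (d.deployedAt - d.commitAt) / HOUR);
  const failures = deploys.filter((d) => d.failed);
  const restoreTimes = failures
    .filter((d) => d.restoredAt !== undefined)
    .map((d) => ((d.restoredAt as number) - d.deployedAt) / HOUR);

  return {
    medianLeadTimeHours: median(leadTimes),
    deploysPerWeek: (deploys.length * 7) / windowDays,
    changeFailureRate: failures.length / deploys.length,
    meanTimeToRestoreHours:
      restoreTimes.length > 0 ? restoreTimes.reduce((a, b) => a + b, 0) / restoreTimes.length : null,
  };
}
```

Tracking the trend across weeks matters more than any single value: rising lead time or failure rate is an early sign that the validation loop is slowing down.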

6.2 Product-side MVP metrics

We chose three “brutal” early signals:

- Onboarding completion rate: do users reach the point where tracking is meaningful?

- Time‑to‑first‑entry: how quickly users log their first meaningful entry after sign‑up.

- 7/14‑day retention: do they come back and keep using the product?

Each metric connects directly to a decision:

- Low onboarding completion → simplify the flow instead of adding features.

- Slow time‑to‑first‑entry → reduce steps and improve in‑product guidance.

- Weak retention → focus on trust, predictability, and habit‑forming moments.
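The three product signals and the decisions tied to them can be computed from a simple per-user activity record. This is a sketch under stated assumptions: `UserActivity` is hypothetical, retention uses one common “unbounded” definition (any entry on or after day N), and the thresholds in `suggestFocus` are placeholders, not values LYlife used.

```typescript
// Hypothetical per-user analytics record; field names are illustrative.
interface UserActivity {
  signedUpAt: number; // ms timestamp
  onboardingCompletedAt?: number;
  entryTimestamps: number[]; // ms timestamps of tracking entries
}

const DAY = 86_400_000;

function onboardingCompletionRate(users: UserActivity[]): number {
  if (users.length === 0) return 0;
  return users.filter((u) => u.onboardingCompletedAt !== undefined).length / users.length;
}

// Median hours from sign-up to first entry, among users who logged anything.
function medianTimeToFirstEntryHours(users: UserActivity[]): number | null {
  const hours = users
    .filter((u) => u.entryTimestamps.length > 0)
    .map((u) => (Math.min(...u.entryTimestamps) - u.signedUpAt) / 3_600_000)
    .sort((a, b) => a - b);
  if (hours.length === 0) return null;
  const mid = Math.floor(hours.length / 2);
  return hours.length % 2 === 1 ? hours[mid] : (hours[mid - 1] + hours[mid]) / 2;
}

// Unbounded day-N retention: share of eligible users with any entry on or
// after day N. Only users who signed up at least N days ago are eligible.
function retention(users: UserActivity[], days: number, now: number): number | null {
  const eligible = users.filter((u) => now - u.signedUpAt >= days * DAY);
  if (eligible.length === 0) return null;
  const retained = eligible.filter((u) => u.entryTimestamps.some((t) => t - u.signedUpAt >= days * DAY));
  return retained.length / eligible.length;
}

// Maps signals to the decisions above. Thresholds are placeholders only.
function suggestFocus(m: { completion: number; ttfeHours: number | null; d7: number | null }): string[] {
  const focus: string[] = [];
  if (m.completion < 0.6) focus.push("simplify onboarding");
  if (m.ttfeHours !== null && m.ttfeHours > 24) focus.push("reduce steps to first entry");
  if (m.d7 !== null && m.d7 < 0.3) focus.push("work on trust and habit-forming moments");
  return focus;
}
```

Whichever retention definition you pick, keep it fixed across releases; otherwise week-over-week comparisons stop meaning anything.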

On top of that, we ran short, structured interviews focused on friction points and moments of doubt (“Do I trust this?” “What did you expect to happen next?”).

7. Common mistakes that slow MVP validation

Across MVPs—in healthcare and beyond—we see the same mistakes:

- Overbuilding the backend too early, before real usage patterns exist.

- Keeping permissions only in application code instead of near the data.

- Letting scope creep quietly erode timelines (“just one more feature”).

- Skipping a measurement plan, so updates don’t translate into learning.

- Adding workflow gates (like “onboarding before tracking”) but not verifying them in practice.

Anything that increases rework, uncertainty, or fragility slows validation, no matter how fast the first version was coded.

LYlife was our way of designing those problems out from the start.

8. What happens next: from “test-ready” to “confident”

Once LYlife reached test‑ready, the goal changed. It wasn’t about adding more features; it was about increasing confidence.

We moved into a short hardening loop focused on the critical path:

- End-to-end runs of onboarding → tracking → history on real devices and real networks.

- Targeted authorization tests to try to break user isolation.

- Performance passes on mobile for cold starts and interrupted sessions.

- Stronger reliability basics: logs, error boundaries, retries, and recovery paths.

- Baseline metrics for both delivery and product behavior, plus a discipline of small, frequent releases with quick rollback.
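For the “retries and recovery paths” item, a common pattern is capped exponential backoff with full jitter, so many clients recovering at the same moment don’t retry in lockstep. This is a minimal sketch; `withRetry`, `backoffDelayMs`, and `RetryOptions` are hypothetical names, and `random` and `sleep` are injectable only to make the behavior testable.

```typescript
// Hypothetical sketch: retry a flaky network call with capped exponential
// backoff and full jitter.

interface RetryOptions {
  maxAttempts: number; // total tries, including the first
  baseDelayMs: number;
  maxDelayMs: number;
  random?: () => number; // defaults to Math.random
  sleep?: (ms: number) => Promise<void>; // defaults to a setTimeout-based delay
}

// attempt is 1 for the first retry, 2 for the second, and so on.
function backoffDelayMs(attempt: number, opts: RetryOptions): number {
  const cap = Math.min(opts.maxDelayMs, opts.baseDelayMs * 2 ** (attempt - 1));
  const random = opts.random ?? Math.random;
  return Math.floor(random() * cap); // full jitter: a random delay below the cap
}

async function withRetry<T>(fn: () => Promise<T>, opts: RetryOptions): Promise<T> {
  const sleep = opts.sleep ?? ((ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms)));
  let lastError: unknown;
  for (let attempt = 1; attempt <= opts.maxAttempts; attempt++) {
    try {
      return await fn();
    } catch (err) {
      lastError = err;
      // Wait before the next try, but not after the final failure.
      if (attempt < opts.maxAttempts) await sleep(backoffDelayMs(attempt, opts));
    }
  }
  throw lastError; // recovery path: surface the last error to the caller
}
```

Retries are only safe for idempotent requests (or writes protected by an idempotency key); otherwise a retried save can create duplicate entries.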

This is the same path we follow with new clients: ship what’s testable, learn from reality, and iterate without drama.

If you’re planning an MVP and want it to be test‑ready in weeks—not quarters—while staying trustworthy and adaptable, we can apply this playbook to your product too.

Next step

Ready for the next byte?

Turn this framework into a scoped MVP plan with a tight delivery loop.