MVP Pricing & Packaging: Test Willingness to Pay Early (2026 Guide)

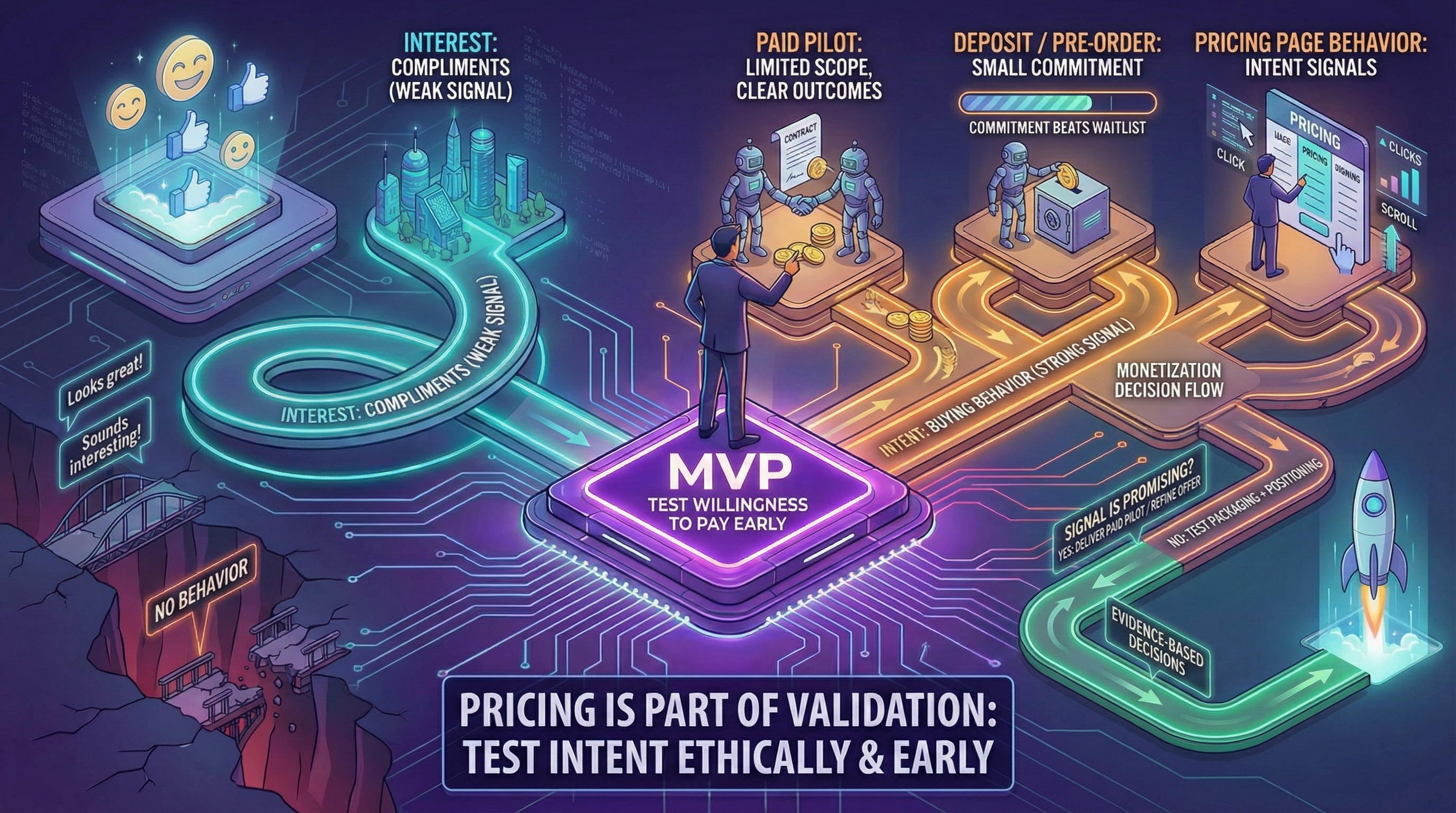

Skipping pricing until "later" is one of the most common MVP mistakes—and one of the most expensive. This guide shows how to test willingness to pay early with paid pilots, deposits, and structured offers, so you have real evidence before you commit to a pricing model.

Many MVPs avoid pricing until “later.” Later becomes never, and the product becomes a free toy.

You don’t need perfect pricing at MVP stage. You do need a credible test of willingness to pay.

This article shows how to test intent ethically and early—then feed that signal into your post-launch decision (see From MVP to product decisions).

Interest vs intent

Interest is compliments. Intent is behavior:

- asking about pricing

- requesting a quote

- agreeing to a paid pilot

- committing budget

Your MVP should test intent.

Pricing strategy framework for MVPs

Before you test pricing, choose your anchor.

Value-based pricing

Best for: B2B SaaS, outcome-driven products

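Method: Price = Value delivered × Capture rate, where value delivered is anchored to what the customer’s current alternative costs (an agency, a contractor, or in-house hours)

Example: If your tool saves 20 hours per month on recruiting work that agencies charge $5k/month for, you could position at $2k/month: a 40% capture rate on the alternative cost ($5k × 40% = $2k).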
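A minimal Python sketch of the example’s arithmetic, assuming value delivered is approximated by the monthly cost of the customer’s alternative. The function name and its inputs are illustrative, not a standard formula.

```python
def value_based_price(alternative_cost: float, capture_rate: float) -> int:
    """Price as a share of the value delivered, rounded to whole currency units.

    Assumption: value delivered is approximated by what the customer's
    current alternative (agency, contractor, in-house hours) costs per month.
    """
    if not 0 < capture_rate <= 1:
        raise ValueError("capture_rate must be in (0, 1]")
    return round(alternative_cost * capture_rate)


# Recruiting example: agencies charge $5k/month, target a 40% capture rate.
price = value_based_price(alternative_cost=5000, capture_rate=0.40)
print(f"${price}/month")  # $2000/month
```

A capture rate well below 100% leaves the customer a clear saving versus the alternative, which is what makes switching worth the effort.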

Cost-plus pricing

Best for: Resource-intensive services, marketplace MVPs

Method: Direct costs + Target margin (30-50% at MVP stage)

Risk: Ignores the willingness-to-pay ceiling, so you might leave money on the table or overprice.
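“Target margin” can be read two ways, and the two readings give different prices. A minimal Python sketch, assuming margin means profit as a share of the selling price and markup means profit as a share of cost; the $700 direct cost is hypothetical.

```python
def price_from_margin(direct_cost: float, margin: float) -> float:
    """Price where profit is `margin` of the selling price (gross margin)."""
    if not 0 <= margin < 1:
        raise ValueError("margin must be in [0, 1)")
    return round(direct_cost / (1 - margin), 2)


def price_from_markup(direct_cost: float, markup: float) -> float:
    """Price where profit is `markup` of the direct cost."""
    return round(direct_cost * (1 + markup), 2)


# Hypothetical: $700 of direct cost per customer per month,
# evaluated at both ends of the 30-50% target range.
for rate in (0.30, 0.50):
    print(rate, price_from_margin(700, rate), price_from_markup(700, rate))
```

At a 30% target on that cost, the margin reading gives $1,000 and the markup reading $910, so state which one you mean when you set the target.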

Competitive pricing

Best for: Crowded markets, feature parity positioning

Method: Benchmark 3-5 direct competitors, then position between 20% below and 30% above their prices, depending on how differentiated you are

Risk: A race to the bottom if you are not differentiated.
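A minimal Python sketch of the benchmarking step, assuming the benchmark is the median of the competitors’ prices (an average or a closest-competitor price would also work). The competitor prices are hypothetical.

```python
from statistics import median


def competitive_band(competitor_prices: list[float],
                     floor: float = -0.20,
                     ceiling: float = 0.30) -> tuple[float, float]:
    """Return the (low, high) price band around the competitor median.

    Assumption: the benchmark is the median of 3-5 direct competitors;
    floor/ceiling are the -20% / +30% positioning range.
    """
    if not 3 <= len(competitor_prices) <= 5:
        raise ValueError("benchmark 3-5 direct competitors")
    benchmark = median(competitor_prices)
    return (round(benchmark * (1 + floor), 2),
            round(benchmark * (1 + ceiling), 2))


# Hypothetical monthly prices of four direct competitors (median = 69).
low, high = competitive_band([49, 59, 79, 99])
print(low, high)
```

Where you land inside the band is the judgment call: the less differentiated you are, the closer to the low end.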

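Which framework to choose?

Start from the “Best for” notes above: value-based when you can quantify the outcome, cost-plus when delivery costs dominate, competitive when buyers already compare you against established alternatives. Then adjust for your market.

B2B vs B2C considerations: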

- B2B: Annual contracts (often priced at 8-12x the monthly rate), payment terms (Net 30 is common), procurement friction (purchases wait on budget cycles)

- B2C: Monthly subscriptions, instant payment (Stripe/PayPal), higher churn tolerance
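The 8-12x multiple is easier to reason about as an implied discount versus paying monthly for 12 months. A small Python sketch of that arithmetic; the $2,000/month price reuses the value-based example and is illustrative.

```python
def annual_contract(monthly_price: float, multiple: float) -> tuple[float, float]:
    """Return (annual price, implied discount vs. 12 monthly payments)."""
    if not 0 < multiple <= 12:
        raise ValueError("multiple should be between 0 and 12 months")
    annual = monthly_price * multiple
    discount = 1 - multiple / 12
    return annual, discount


for multiple in (8, 10, 12):
    annual, discount = annual_contract(2000, multiple)
    print(f"{multiple}x: ${annual:,.0f}/year ({discount:.0%} off monthly)")
```

Read this way, 8x is a 33% discount for paying annually, 12x is no discount, and 10x sits at roughly 17%.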

The paid pilot playbook

A paid pilot is not a discount—it’s a structured validation experiment.

Pilot structure template

Scope definition:

- Duration: 4-8 weeks (not “ongoing”)

- Deliverables: 3-5 specific outcomes (not features)

- Success criteria: Measurable metrics both parties agree on

- Exit clause: Either party can terminate with 1 week’s notice
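One way to keep the template honest is to encode it as data and check a proposed scope before anyone signs. A minimal Python sketch; `PilotScope` and its field names are hypothetical, the checks mirror only the template rules listed above, and the example values are taken from the B2B pilot scope in this section.

```python
from dataclasses import dataclass


@dataclass
class PilotScope:
    """A paid pilot scope, checked against the template rules."""
    product: str
    duration_weeks: int
    deliverables: list[str]
    success_criteria: list[str]
    exit_notice_weeks: int = 1

    def problems(self) -> list[str]:
        """Return every template rule this scope breaks (empty list = OK)."""
        issues = []
        if not 4 <= self.duration_weeks <= 8:
            issues.append("duration should be 4-8 weeks, not open-ended")
        if not 3 <= len(self.deliverables) <= 5:
            issues.append("define 3-5 specific outcomes")
        if not self.success_criteria:
            issues.append("write down at least one agreed success metric")
        if self.exit_notice_weeks > 1:
            issues.append("exit clause should allow termination with 1 week's notice")
        return issues


scope = PilotScope(
    product="Sales lead enrichment tool",
    duration_weeks=6,
    deliverables=[
        "500 leads enriched with job title + company size",
        "<5% error rate on firmographic data",
        "CSV export weekly",
    ],
    success_criteria=["30% improvement in SDR connect rate"],
)
print(scope.problems())  # []
```

The code can only check that a success metric exists; whether it is measurable is still a conversation both parties need to have.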

Example B2B pilot scope:

Product: Sales lead enrichment tool

Duration: 6 weeks

Deliverables:

- 500 leads enriched with job title + company size

- <5% error rate on firmographic data

- CSV export weekly

Success metric: 30% improvement in SDR connect rate

Pilot to contract conversion benchmarks

Industry data (2025-2026):

- B2B SaaS: 35-45% pilot → annual contract

- Marketplace: 20-30% pilot → recurring usage

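- Enterprise: 50-65% (higher because procurement friction comes upfront: by the time an enterprise pilot starts, budget and approvals are usually already in place)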

What kills conversions:

- Unclear success criteria (pilot ends, no one knows if it worked)

- Scope creep (pilot becomes free consulting)

- No internal champion (user loves it, decision-maker never engaged)
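The first failure mode is avoidable if the success metric is a check both parties can run on the same numbers. A minimal Python sketch for the example’s “30% improvement in SDR connect rate”, assuming the 30% is a relative lift over a pre-pilot baseline (not 30 percentage points); the function names and call counts are hypothetical.

```python
def connect_rate(connects: int, dials: int) -> float:
    """Share of SDR dials that reached a live prospect."""
    if dials <= 0:
        raise ValueError("dials must be positive")
    return connects / dials


def pilot_succeeded(baseline: float, pilot: float,
                    required_lift: float = 0.30) -> bool:
    """True if the pilot rate beats the baseline by at least required_lift (relative)."""
    if baseline <= 0:
        raise ValueError("baseline rate must be positive")
    return (pilot - baseline) / baseline >= required_lift


# Hypothetical numbers: the period before the pilot vs. the 6-week pilot.
before = connect_rate(connects=80, dials=1000)   # 8.0%
during = connect_rate(connects=165, dials=1500)  # 11.0%
print(f"lift: {(during - before) / before:.1%}")
print("success" if pilot_succeeded(before, during) else "no clear win")
```

Writing the baseline period and this formula into the pilot agreement removes the “no one knows if it worked” ending.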

Deposit vs waitlist: behavioral signal comparison

Real-world test (anonymized):

- Waitlist approach: 347 signups, 31 converted to paid (8.9%)

- $50 deposit approach: 43 deposits, 38 converted to paid (88.4%)

Waitlist = interest. Deposit = intent.
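The percentages in this test can be reproduced from the raw counts. A short Python sketch; note that the deposit approach produced more paying customers (38 vs 31) from roughly an eighth of the sign-ups.

```python
def conversion(converted: int, started: int) -> float:
    """Share of sign-ups (or deposits) that became paying customers."""
    return converted / started


experiments = {
    "waitlist": (31, 347),
    "$50 deposit": (38, 43),
}
for name, (paid, signups) in experiments.items():
    rate = conversion(paid, signups)
    print(f"{name}: {paid}/{signups} = {rate:.1%} -> {paid} paying")
```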

Avoiding false positives in pricing validation

Trap 1: “Would you pay?” questions

Hypothetical willingness ≠ actual behavior.

A 2024 study found 73% of surveyed users said they’d pay $20/month for a tool. Only 12% actually did when it launched.

Better approach: “If I offered this at $X today, would you start a paid trial this week?”

- Adds time pressure (week, not “someday”)

- Commitment action (trial, not abstract “buy”)

Trap 2: Vanity waitlists

Waitlist size is not a pricing signal unless:

- Users provide payment method (even if not charged yet)

- Deposit required (refundable is fine)

- Commitment action (pre-order, pilot agreement)

Otherwise: 90% drop-off at launch is normal.
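A minimal Python sketch of separating real pricing signals from raw waitlist size, assuming any one of the three commitment actions is enough to count a sign-up as intent. `Signup` and its fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Signup:
    email: str
    payment_method_on_file: bool = False
    deposit_paid: bool = False
    commitment_signed: bool = False  # pre-order or pilot agreement

    def shows_intent(self) -> bool:
        """Any one of the three commitment actions counts as intent."""
        return (self.payment_method_on_file
                or self.deposit_paid
                or self.commitment_signed)


signups = [
    Signup("a@example.com"),
    Signup("b@example.com", payment_method_on_file=True),
    Signup("c@example.com", deposit_paid=True),
    Signup("d@example.com"),
]
committed = [s for s in signups if s.shows_intent()]
print(f"{len(committed)} of {len(signups)} signups carry a pricing signal")
```

Report the committed count next to the total, not instead of it: the gap between the two is itself a signal.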

Trap 3: Friends & family pricing

Never validate pricing with your network. Friends and family tend to:

- Overpay to support you

- Underpay because of a “friend discount”

- Give falsely positive feedback

Rule: Pricing validation requires strangers with budget authority.

Ethical boundaries: simulation vs deception

OK to simulate:

- Manual backend operations (Wizard of Oz MVP—see Types of MVPs)

- Delayed feature delivery (“coming in 2 weeks”)

- Small-scale service (concierge MVP)

NOT OK:

- Fake testimonials or metrics

- Claiming automation that doesn’t exist (for safety-critical features)

- Bait-and-switch pricing post-pilot

From pilot to product: decision checkpoints

Threshold metrics before public launch:

- B2B: 5-10 paid pilots with 40%+ conversion to annual contracts

- B2C: 50-100 paid users with <30% churn in Month 2

- Marketplace: 20+ transactions with 60%+ repeat rate

If you don’t hit thresholds, don’t launch broadly—run more pilots.
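The checkpoints above can be written as a single go/no-go check. A minimal Python sketch, assuming the lower end of each range (5 pilots, 50 users) is the minimum; the function name and argument meanings are hypothetical.

```python
def ready_for_broad_launch(segment: str, count: int, rate: float) -> bool:
    """Check the pre-launch thresholds for one segment.

    b2b:         count = paid pilots,   rate = pilot -> annual contract conversion
    b2c:         count = paid users,    rate = month-2 churn (lower is better)
    marketplace: count = transactions,  rate = repeat rate
    """
    if segment == "b2b":
        return count >= 5 and rate >= 0.40
    if segment == "b2c":
        return count >= 50 and rate < 0.30
    if segment == "marketplace":
        return count >= 20 and rate >= 0.60
    raise ValueError(f"unknown segment: {segment}")


print(ready_for_broad_launch("b2b", count=6, rate=0.50))   # True
print(ready_for_broad_launch("b2c", count=80, rate=0.35))  # False
```

Both conditions must hold: 20 pilots at 20% conversion fails the B2B check just as 3 pilots at 100% does.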

MVP packaging mistakes to avoid

Anti-pattern 1: Feature-based tiers

Bad example:

- Starter: 5 users, 10 projects

- Pro: 20 users, 50 projects

- Enterprise: Unlimited

Why bad: Users don’t buy “users” or “projects”—they buy outcomes.

Better approach:

- Validation Pack: Test with 50 leads/month

- Growth Pack: Scale to 500 leads/month

- Enterprise: Custom volume + SLA

Anti-pattern 2: Too many options

3 tiers = optimal (proven via preference tests)

5+ tiers = decision paralysis

Anti-pattern 3: “Contact us” pricing

Only use if:

- Deal size >$50k annually

- Custom implementation required per customer

- Legal/compliance varies significantly

Otherwise: Transparent pricing wins conversion.

Packaging: what users buy at MVP stage

At MVP stage, users buy outcomes. Keep the offer outcome-based and limited.

Think:

- “10 validated sales leads per week” (outcome)

- Not “Lead generation software with 47 features” (feature list)

Fast pricing tests you can run today

Paid pilot

Limited scope, clear outcomes. High-signal in B2B. See full playbook above.

Deposit / pre-order

Even a small commitment (€50-€200) beats a large waitlist because it proves intent.

Pricing page behavior

Not validation by itself, but reveals intent signals:

- Time spent on pricing page (>30 seconds = serious)

- CTA clicks (“Request demo” vs “Start trial”)

- Exit surveys (“Too expensive” vs “Not sure about value”)

Combine these signals with actual paid actions for a complete picture.
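A minimal Python sketch of tallying these signals, assuming you can export per-visit time on page, the CTA clicked, and any exit-survey answer. `PricingVisit` and `summarize` are hypothetical names, and the 30-second cutoff is the rule of thumb above.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class PricingVisit:
    seconds_on_page: float
    cta_clicked: Optional[str] = None   # e.g. "Request demo", "Start trial"
    exit_reason: Optional[str] = None   # e.g. "Too expensive"


def summarize(visits: list[PricingVisit]) -> dict:
    """Aggregate pricing-page visits into the three intent signals."""
    serious = sum(1 for v in visits if v.seconds_on_page > 30)
    return {
        "visits": len(visits),
        "serious_share": serious / len(visits) if visits else 0.0,
        "cta_clicks": Counter(v.cta_clicked for v in visits if v.cta_clicked),
        "exit_reasons": Counter(v.exit_reason for v in visits if v.exit_reason),
    }


visits = [
    PricingVisit(45, cta_clicked="Start trial"),
    PricingVisit(12, exit_reason="Too expensive"),
    PricingVisit(70, cta_clicked="Request demo"),
    PricingVisit(8, exit_reason="Not sure about value"),
]
print(summarize(visits))
```

“Too expensive” and “Not sure about value” call for different fixes (price vs messaging), so the exit reasons are worth counting separately rather than folding into one bounce rate.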

Conclusion

Pricing is part of validation, not a post-launch concern. Test intent early with simple experiments (paid pilots, deposits, structured offers), then decide the next move with evidence.

Remember:

- Interest (compliments, waitlists) ≠ Intent (deposits, pilots, budget commits)

- 5-10 paid pilots = minimum before broad launch (B2B)

- Outcome-based packaging > feature-based tiers

- Transparent pricing > “Contact us” (for most MVPs)

Next: From MVP to product decisions—how to interpret your pricing signals and decide whether to scale, pivot, or iterate.