MVP vs Prototype vs PoC: What to Build and When (2026 Guide)

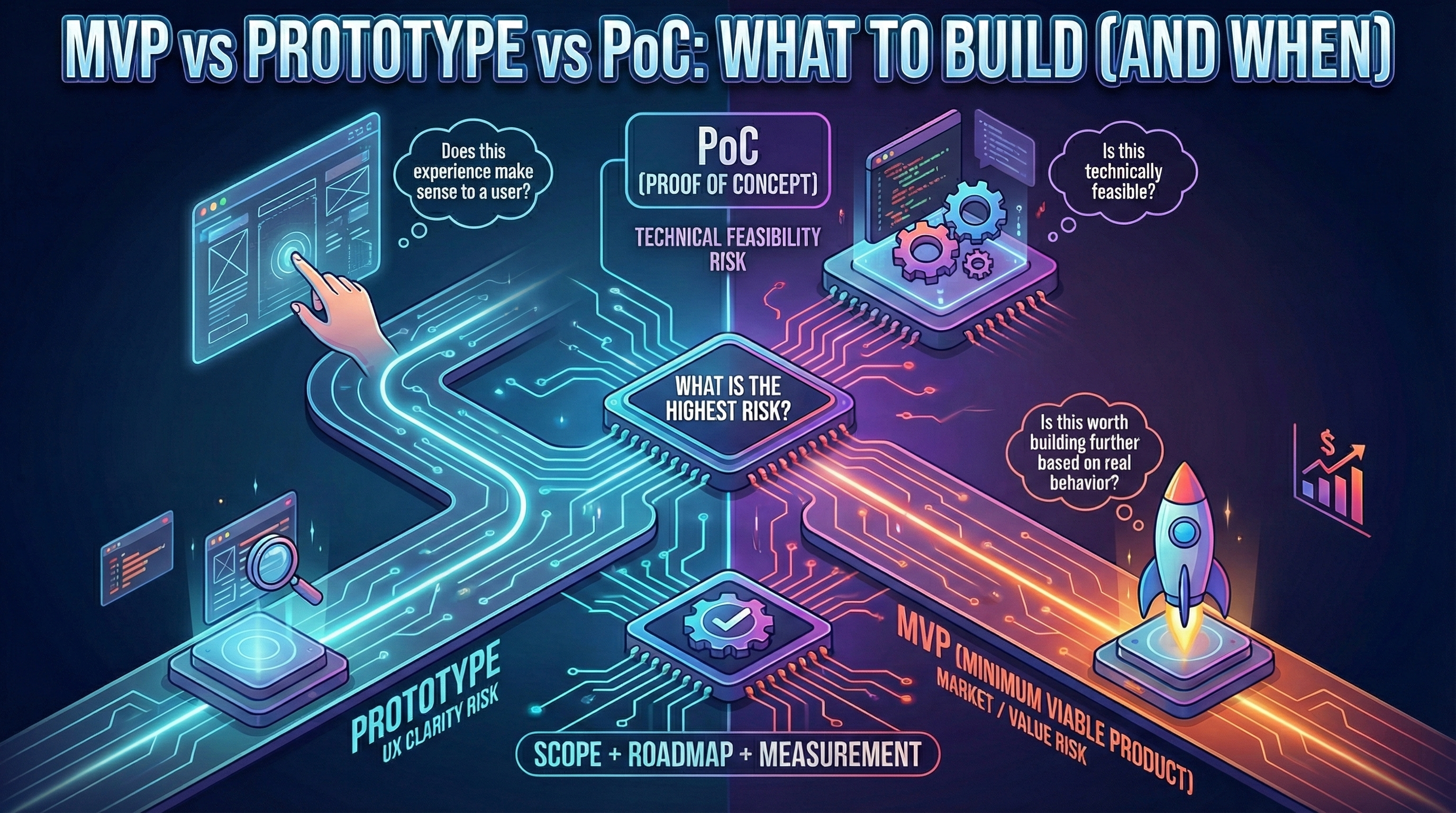

“MVP”, “prototype”, and “proof of concept” are often used as if they mean the same thing. They don’t—and that confusion is expensive.

When teams pick the wrong artifact, they answer the wrong question. That leads to overbuilding, delayed learning, and the classic startup pain: months of effort with no clear signal.

If you’re building something new, this article will help you choose the right tool at the right time—then move to scoping and delivery with confidence (see How to Scope an MVP and the MVP roadmap).

The real difference is intent

Each artifact targets a different type of risk:

- Prototype: “Does this experience make sense to a user?”

- PoC (Proof of Concept): “Is this technically feasible?”

- MVP: “Is this worth building further—based on real behavior?”

If you build an MVP when the real risk is UX clarity, you’ll ship something confusing. If you build a prototype when the real risk is technical feasibility, you’ll validate a flow that can’t be delivered. If you build a PoC when the real risk is demand, you’ll burn weeks proving what doesn’t matter.

For more on choosing the right approach based on your specific situation, see our AI vs no-code vs low-code comparison.

Prototype: reduce UX risk quickly

A prototype is a learning tool for understanding and usability. It’s used to explore flows, test comprehension, and identify friction before you commit to implementation.

Typical characteristics:

- Low to medium fidelity (Figma, Framer, or coded screens with no working backend)

- Often non-functional (clickable mockups, not real data)

- Fast to change (hours to adjust, not days)

Prototypes are great when:

- The user journey is complex (multi-step onboarding, conditional flows)

- You’re unsure what “first value” looks like (what’s the aha moment?)

- Onboarding and flow clarity are your biggest risks

Real example:

A B2B analytics tool tested 3 dashboard layouts in Figma:

- Layout A: Calendar view (won user tests, 80% comprehension)

- Layout B: List view (70% comprehension)

- Layout C: Kanban board (40% comprehension, users confused)

Result: Built MVP with Layout A only. Saved 4 weeks vs building all 3.

Cost/Timeline: 1-2 weeks, €500-€2k (if outsourced design).

A good prototype helps you avoid building the wrong experience. But prototypes don’t prove value, retention, or willingness to pay.

PoC: reduce technical risk quickly

A proof of concept is a technical experiment built to answer feasibility questions such as:

- Can we integrate with X?

- Can we hit latency/performance targets?

- Can the approach work with real constraints?

Typical characteristics:

- Minimal UI (often CLI or basic HTML)

- Isolated scope (single feature, not full product)

- Often disposable (throwaway code, not production-ready)

PoCs are great when:

- Integrations are hard or risky (legacy APIs, third-party rate limits)

- You depend on an unknown third-party constraint (can Stripe handle our flow?)

- The “engine” is the main uncertainty (ML model accuracy, real-time sync feasibility)

Real example 1: Real-time sync PoC

Collaboration tool needed real-time document sync (Google Docs-style).

Question: Can we sync 10+ users editing simultaneously with <500ms latency?

PoC: Built a basic Node.js + WebSocket implementation and measured sync latency with up to 10 concurrent users.

Result: 200ms latency at 10 users (feasible). Proceeded to MVP with confidence.

Cost/Timeline: 1-3 weeks, €2k-€5k.
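A latency PoC like this usually comes down to collecting round-trip samples and comparing a percentile against the target. The sketch below assumes the samples were already captured by the WebSocket test harness. The sample values, the `percentile` helper, and the choice of the 95th percentile as the pass criterion are illustrative assumptions, not figures or methods from the project above.

```python
import math

TARGET_MS = 500  # latency budget taken from the PoC question


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples recorded")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def poc_passes(samples: list[float], target_ms: float = TARGET_MS) -> bool:
    """Pass only if the 95th-percentile latency stays under the budget."""
    return percentile(samples, 95) < target_ms


# Made-up round-trip times in milliseconds, for illustration only.
latencies_ms = [180, 195, 210, 205, 190, 240, 220, 185, 200, 310]
print(percentile(latencies_ms, 95), poc_passes(latencies_ms))
```

Judging on a high percentile rather than the average keeps a few slow outliers from hiding behind many fast responses.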

Real example 2: Third-party API integration PoC

Fintech MVP needed Plaid integration for bank connections.

Question: Can we connect 3+ EU banks with acceptable error rates?

PoC: Simple script testing Plaid API with 5 banks, logging success/failure rates.

Result: 2 of 5 banks had 30%+ error rates (blocking issue). Pivoted to manual CSV upload MVP instead.

Cost/Timeline: 1 week, €1k-€2k.
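The "simple script" in this kind of PoC is mostly bookkeeping: attempt each connection several times, record the outcome, and flag providers above an error threshold. The sketch below leaves the real API call out entirely. `attempt` is a hypothetical callable you would replace with your own integration code, the bank names are placeholders, and the 30% threshold mirrors the example above.

```python
from collections import Counter
from typing import Callable

ERROR_THRESHOLD = 0.30  # blocking threshold used in the example above


def error_rates(banks: list[str], attempt: Callable[[str, int], bool], tries: int = 20) -> dict[str, float]:
    """Call attempt(bank, i) `tries` times per bank and return the failure share per bank."""
    failures = Counter()
    for bank in banks:
        for i in range(tries):
            try:
                ok = attempt(bank, i)
            except Exception:
                ok = False  # an exception counts as a failed connection
            if not ok:
                failures[bank] += 1
    return {bank: failures[bank] / tries for bank in banks}


def blocking_banks(rates: dict[str, float], threshold: float = ERROR_THRESHOLD) -> list[str]:
    """Banks whose error rate meets or exceeds the threshold."""
    return sorted(bank for bank, rate in rates.items() if rate >= threshold)


# Stand-in for a real integration call: "bank_b" fails every third attempt.
def fake_attempt(bank: str, i: int) -> bool:
    return not (bank == "bank_b" and i % 3 == 0)


rates = error_rates(["bank_a", "bank_b"], fake_attempt, tries=30)
print(rates, blocking_banks(rates))
```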

But a PoC doesn’t validate demand. It validates feasibility.

MVP: validate value with real behavior

An MVP is a real product experiment that a real user can use to achieve a real outcome. It must be usable and measurable.

A true MVP connects:

- A specific user (not “everyone”)

- A specific problem (not “productivity”)

- A measurable outcome (activation, retention, revenue signal)

It is not “a smaller version of the final product.” It’s a test designed to produce a decision.

Real example: B2B sales automation MVP

Sales tool for automating LinkedIn outreach.

Core flow:

- User uploads lead list (CSV)

- Tool sends personalized LinkedIn messages

- User tracks responses in dashboard

What was built (6 weeks):

- CSV upload only (no CRM integrations yet)

- LinkedIn connection requests + 1 follow-up (no sequences yet)

- Basic dashboard (response rate, no analytics)

What was skipped (for v1.1):

- Email outreach

- CRM sync (Salesforce, HubSpot)

- Advanced analytics

- Multi-user teams

Result: 45% of users activated, 35% returned weekly, 8% requested paid pilot. Clear signal to iterate.

Cost/Timeline: 6-10 weeks, €8k-€35k (see pricing tiers).

For a detailed breakdown of what you get during this period, see What you get in 30-45 days with a studio.

Cost and timeline comparison

| Artifact | Timeline | Cost (if outsourced) | Deliverable | Risk Reduced |
|---|---|---|---|---|
| Prototype | 1-2 weeks | €500-€2k | Figma/Framer clickable mockups | UX clarity |
| PoC | 1-3 weeks | €2k-€5k | Throwaway code proving feasibility | Technical feasibility |
| MVP | 6-10 weeks | €8k-€35k | Functional product with measurement | Market/value validation |

When to combine:

- Prototype (2w) + MVP (8w) = 10 weeks total (if UX risk high)

- PoC (2w) + MVP (8w) = 10 weeks total (if technical risk high)

- Prototype (2w) + PoC (2w) + MVP (8w) = 12 weeks (if both risks high)
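The totals above assume the stages run back to back. As a rough sketch of the arithmetic, the helper below also covers running the prototype and PoC side by side. The function name, and the rule that the MVP starts only after every de-risking stage has finished, are assumptions for illustration.

```python
def total_weeks(derisk_weeks: list[int], mvp_weeks: int, parallel: bool = False) -> int:
    """Total calendar weeks for de-risking stages followed by an MVP build."""
    if not derisk_weeks:
        return mvp_weeks
    # Sequential stages add up; parallel stages take as long as the slowest one.
    derisk_total = max(derisk_weeks) if parallel else sum(derisk_weeks)
    return derisk_total + mvp_weeks


print(total_weeks([2], 8))                    # 10: prototype or PoC, then MVP
print(total_weeks([2, 2], 8))                 # 12: prototype, then PoC, then MVP
print(total_weeks([2, 2], 8, parallel=True))  # 10: prototype and PoC in parallel
```

Under these assumptions, running both de-risking stages in parallel brings the "both risks high" case back to 10 weeks, provided the team has capacity for two workstreams.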

If you’re unsure whether to build yourself or partner with a studio, use our DIY vs partner scorecard to evaluate objectively.

Choose based on the highest risk

If you’re unsure, start by listing your top assumptions and selecting the one that would most likely kill the project if wrong. That assumption determines the artifact.
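One way to make this concrete is to score each assumption and let the riskiest one pick the artifact. The sketch below is illustrative only: the `Assumption` fields, the 1-5 scales, and ranking by likelihood times impact are assumptions, not a method prescribed by this guide.

```python
from dataclasses import dataclass

# Risk type -> artifact, following the three questions earlier in this guide.
ARTIFACT_FOR_RISK = {"ux": "Prototype", "technical": "PoC", "value": "MVP"}


@dataclass
class Assumption:
    statement: str
    risk_type: str          # "ux", "technical", or "value"
    likelihood_wrong: int   # 1 (unlikely) to 5 (very likely)
    impact_if_wrong: int    # 1 (minor) to 5 (kills the project)

    @property
    def score(self) -> int:
        return self.likelihood_wrong * self.impact_if_wrong


def choose_artifact(assumptions: list[Assumption]) -> str:
    """Return the artifact that targets the highest-scoring assumption."""
    riskiest = max(assumptions, key=lambda a: a.score)
    return ARTIFACT_FOR_RISK[riskiest.risk_type]


# Hypothetical assumptions with made-up scores.
assumptions = [
    Assumption("Users understand the onboarding flow", "ux", 2, 3),
    Assumption("Sync stays under 500ms with 10 users", "technical", 4, 5),
    Assumption("Teams will pay for this", "value", 3, 5),
]
print(choose_artifact(assumptions))
```

Here the technical assumption scores highest (4 × 5 = 20), so the sketch points to a PoC first.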

Typical sequences that work

There isn’t one “correct” order, but these sequences are common:

Sequence 1: Prototype → MVP (UX risk high)

When: Complex user journeys, novel interactions, B2C products.

Flow:

- Build Figma prototype (2 weeks)

- User test with 10 people (1 week)

- Refine based on feedback

- Build MVP with validated UX (6-8 weeks)

Example: Healthcare appointment booking tested 3 UX flows, built only the winner.

Sequence 2: PoC → MVP (technical risk high)

When: Integrations critical, real-time requirements, ML/AI features.

Flow:

- Build PoC proving feasibility (2-3 weeks)

- If successful, extract learnings

- Build MVP properly (don’t evolve PoC into production)

Example: Real-time collaboration tool PoC’d sync latency before committing to MVP.

Sequence 3: Prototype + PoC → MVP (both risks high)

When: Complex UX + technical unknowns.

Flow:

- Parallel: Prototype (UX) + PoC (tech) (2-3 weeks each)

- Validate both separately

- Combine learnings into MVP scope

Example: AI-powered design tool prototyped UX + PoC’d ML model accuracy simultaneously.

Sequence 4: Skip straight to MVP (risks low)

When: Standard UX patterns + proven tech stack + experienced team.

Flow:

- Jump to MVP (6-8 weeks)

- Measure and iterate

Example: B2B SaaS with CRUD operations, team built 5 MVPs before, no novel UX.
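The four sequences reduce to a decision on two flags. A minimal sketch, assuming you have already judged UX risk and technical risk as high or low (the function name and boolean inputs are illustrative):

```python
def pick_sequence(ux_risk_high: bool, tech_risk_high: bool) -> list[str]:
    """Map the two risk judgments to one of the four sequences above."""
    if ux_risk_high and tech_risk_high:
        return ["Prototype + PoC (in parallel)", "MVP"]  # Sequence 3
    if ux_risk_high:
        return ["Prototype", "MVP"]                      # Sequence 1
    if tech_risk_high:
        return ["PoC", "MVP"]                            # Sequence 2
    return ["MVP"]                                       # Sequence 4


print(pick_sequence(ux_risk_high=True, tech_risk_high=False))
```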

Transition playbook: from prototype/PoC to MVP

From Prototype to MVP

Extract:

- Validated user flows (what worked in testing)

- Drop-off points (where users got confused)

- Feature priorities (what users asked for first)

Rebuild properly:

- Don’t evolve prototype code into MVP (prototypes are optimized for speed, not maintainability)

- Start fresh with production-ready stack

- Keep only validated UX patterns

Timeline: Prototype learnings → MVP scoping (3-5 days) → MVP build (6-8 weeks).

From PoC to MVP

Extract:

- Proof that approach works (integration, latency, accuracy)

- Known constraints (API rate limits, performance bottlenecks)

- Libraries/services that worked (vs ones that failed)

Rebuild properly:

- Never ship PoC code to production (PoCs skip error handling, security, scalability)

- Use PoC as reference architecture only

- Build MVP with production patterns (logging, monitoring, tests)

Timeline: PoC validation → MVP scoping (3-5 days) → MVP build (6-8 weeks).

Teams that skip proper hardening often see early signs of technical debt within weeks.

When to skip steps

Skip Prototype when:

- Standard UX patterns (login, CRUD forms, dashboards)

- Team has deep domain experience (built 3+ similar products)

- Low UX risk (B2B internal tool with training, not consumer self-serve)

Skip PoC when:

- Proven tech stack (Next.js + Vercel + Supabase = well-trodden path)

- No critical integrations (pure CRUD, no third-party APIs)

- Team has built similar architecture before

Skip both (straight to MVP) when:

- Experienced team + standard patterns + low technical risk

- Time-to-market critical (competitive window closing)

- You’ve prototyped/PoC’d similar products before (learnings transfer)

Common mistakes

Mistake 1: Calling a prototype an MVP

Symptom: “We validated our MVP!” (showed Figma mockups to 10 users, got positive feedback).

Why it’s wrong: Prototype validates comprehension, not value or retention.

Fix: Prototype first, then build functional MVP and measure real behavior.

Mistake 2: PoC becomes production

Symptom: “Let’s just ship the PoC, it works!” (6 months later: crashes, security issues, can’t scale).

Why it’s wrong: PoCs skip production concerns (error handling, security, monitoring).

Fix: Treat the PoC as disposable. Extract the learnings, then rebuild properly for the MVP. If every fix starts breaking something else, that is a sign PoC code has already reached production.

Mistake 3: Skipping measurement

Symptom: Built MVP, launched, no analytics, “users seem to like it.”

Why it’s wrong: MVP without measurement = expensive prototype.

Fix: MVP must have analytics from Day 1 (see metrics guide).
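As a rough sketch of what "analytics from Day 1" can mean at its smallest: record timestamped events per user and compute activation and weekly return from them. The event names, the definition of activation (at least one `core_action`), and the definition of weekly return (core actions in more than one ISO week) are assumptions you would replace with your own.

```python
from datetime import date

# (user_id, event_name, day) tuples; made-up events for illustration only.
events = [
    ("u1", "signup", date(2026, 1, 5)),
    ("u1", "core_action", date(2026, 1, 5)),
    ("u1", "core_action", date(2026, 1, 13)),
    ("u2", "signup", date(2026, 1, 6)),
    ("u2", "core_action", date(2026, 1, 7)),
    ("u3", "signup", date(2026, 1, 6)),
    ("u4", "signup", date(2026, 1, 8)),
]


def week_of(day: date) -> tuple[int, int]:
    """Return (ISO year, ISO week number) for a date."""
    iso = day.isocalendar()
    return (iso[0], iso[1])


def mvp_metrics(events: list[tuple[str, str, date]]) -> dict[str, float]:
    """Activation and weekly-return rates as a share of signed-up users."""
    signed_up = {user for user, name, _ in events if name == "signup"}
    weeks_active: dict[str, set] = {}
    for user, name, day in events:
        if name == "core_action":
            weeks_active.setdefault(user, set()).add(week_of(day))
    activated = signed_up & set(weeks_active)
    returned = {u for u in activated if len(weeks_active[u]) > 1}
    n = len(signed_up)
    return {
        "activation_rate": len(activated) / n if n else 0.0,
        "weekly_return_rate": len(returned) / n if n else 0.0,
    }


print(mvp_metrics(events))
```

Even a table of events this simple is enough to turn "users seem to like it" into numbers you can compare week over week.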

Mistake 4: Building all three sequentially when one would work

Symptom: Prototype (3w) + PoC (3w) + MVP (10w) = 16 weeks when MVP alone would suffice.

Why it’s wrong: Over-derisking burns time (opportunity cost).

Fix: Identify highest risk only, validate that, then build MVP.

Conclusion

Prototype, PoC, and MVP are complementary tools. Choose the one that answers your riskiest question fastest. Then move into scope and delivery with discipline.

Remember:

- Prototype = UX risk (1-2 weeks, €500-€2k)

- PoC = Technical risk (1-3 weeks, €2k-€5k)

- MVP = Market/value risk (6-10 weeks, €8k-€35k)

- Typical sequences: Prototype→MVP, PoC→MVP, both→MVP, or skip to MVP

- Never ship prototype/PoC code to production (rebuild properly)

Next step: How to scope an MVP—feature prioritization after choosing your path.

For strategic decisions about your next steps, see From MVP to product: key decisions.