MVP Roadmap: 4-8 Weeks from Discovery to Launch (2026 Timeline Guide)

A realistic MVP timeline is less about speed and more about discipline. This week-by-week roadmap covers discovery, build, and launch for different tech stacks—plus the common blockers that turn a 6-week plan into a 12-week slog and how to avoid them.

Many teams can build quickly, but they lose time through unclear scope, delayed decisions, and late measurement.

If you haven’t scoped yet, start with How to scope an MVP. If you haven’t clarified the problem, start with user interviews.

What this roadmap is (and isn’t)

This roadmap is:

- Focused on learning (not perfection)

- Built around short feedback loops

- Designed to ship a usable, measurable MVP

It is not:

- A rigid plan (adapt to your context)

- A race to launch (speed without measurement = waste)

- A substitute for clear scope (fix scope first, then follow roadmap)

Before week 1: minimum inputs

You need:

- One primary user (not “everyone”)

- One core problem (validated via interviews)

- One hypothesis (“We believe X solves Y, proven by Z”)

- One definition of success (see metrics)

If any of these is missing, pause and fix it first. Otherwise, the roadmap turns into drift.

Weeks 1-2: Discovery and scope lock

Goal: Reduce uncertainty and lock a buildable scope.

Week 1: Problem validation

Activities:

- Conduct 5-8 user interviews (see interview guide)

- Synthesize pain points (common patterns in 3+ interviews = real problem)

- Draft hypothesis (“For [user], [problem] solved by [approach], validated by [signal]”)

Output: Problem framing document (1 page: user, problem, hypothesis).

Week 2: Scope lock

Activities:

- Define happy path to first value

- Apply Must-Have filter (see scoping guide)

- Prioritize features (P0 = MVP, P1 = v1.1)

- Define baseline metrics (activation, retention, revenue intent)

- Set up analytics placeholder (Plausible, PostHog account creation)

Output:

- Locked scope (P0 features only)

- Buildable backlog (stories with acceptance criteria)

- Analytics plan (which events to track)
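
One way to make the analytics plan concrete in Week 2 is to write it down as a small typed event catalog before any tool is wired in. The sketch below is only an illustration: the event names, the `PlannedEvent` shape, and the `uncoveredMetrics` helper are hypothetical and are not part of Plausible or PostHog.

```typescript
// Hypothetical event catalog for an MVP analytics plan.
// Event names and triggers are illustrative assumptions, not tool-specific APIs.
type Metric = "activation" | "retention" | "revenue_intent";

interface PlannedEvent {
  name: string;      // event name that will later be sent to the analytics tool
  metric: Metric;    // which baseline metric the event feeds
  firedWhen: string; // plain-language trigger, usable as an acceptance criterion
}

const analyticsPlan: PlannedEvent[] = [
  { name: "signup_completed", metric: "activation", firedWhen: "user finishes signup" },
  { name: "core_action_completed", metric: "activation", firedWhen: "user completes the core action once" },
  { name: "returned_day_7", metric: "retention", firedWhen: "user is active 7 days after signup" },
  { name: "pricing_viewed", metric: "revenue_intent", firedWhen: "user opens the pricing page" },
];

// Returns the baseline metrics that have no planned event yet,
// so gaps in the plan surface during scope lock rather than at launch.
function uncoveredMetrics(plan: PlannedEvent[]): Metric[] {
  const all: Metric[] = ["activation", "retention", "revenue_intent"];
  return all.filter((m) => plan.filter((e) => e.metric === m).length === 0);
}
```

An empty result from `uncoveredMetrics(analyticsPlan)` means every baseline metric has at least one event behind it.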

Common blocker: Co-founder/stakeholder adds features during Week 2. Fix: Defer to “Later” list, revisit post-launch.

Weeks 3-6: Build thin slices

Goal: Build end-to-end flows early (“thin vertical slices”), then improve what blocks launch.

Sprint 1 (Week 3-4): Core flow end-to-end

Activities:

- Build happy path skeleton (no polish, no edge cases)

- Basic auth (signup and login; use a library such as NextAuth or Firebase Auth)

- Core action (create, search, book, purchase—one action fully functional)

- Basic measurement (event tracking for signup, activation)

Output: Demo-able flow (founder can walk through entire journey).

Common blocker: Perfectionism (“UI isn’t pretty enough”). Fix: Accept ugly for Week 3-4. Polish comes Week 5-6.

Sprint 2 (Week 5): Usability + reliability

Activities:

- Add error handling (user-friendly messages, not 500 errors)

- Improve onboarding UX (reduce friction points from Week 4 testing)

- Add retention hooks (email confirmation, welcome email, first re-engagement nudge)

- Set up error monitoring (Sentry)

Output: Usable MVP (5 alpha users can complete core action without founder help).
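
As a rough illustration of "user-friendly messages, not 500 errors", internal failures can be mapped to a small set of safe responses in one place. This is a minimal sketch under assumptions: the `ErrorKind` categories, status codes, and message wording are hypothetical, and the function is framework-agnostic rather than tied to Next.js or Express.

```typescript
// Hypothetical internal error categories for an MVP backend.
type ErrorKind = "validation" | "unauthorized" | "not_found" | "unexpected";

interface AppError {
  kind: ErrorKind;
  detail: string; // internal detail for logs and error monitoring, never shown to users
}

interface UserFacingError {
  status: number;
  message: string;
}

// Maps an internal error to a response that is safe and helpful to show.
// The internal `detail` is deliberately dropped from the user-facing message.
function toUserFacing(err: AppError): UserFacingError {
  switch (err.kind) {
    case "validation":
      return { status: 400, message: "Please check the highlighted fields and try again." };
    case "unauthorized":
      return { status: 401, message: "Please log in to continue." };
    case "not_found":
      return { status: 404, message: "We could not find what you were looking for." };
    default:
      return { status: 500, message: "Something went wrong on our side. Please try again in a minute." };
  }
}
```

Keeping the mapping in one function makes it easy to review every message an alpha user can see.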

Common blocker: Scope creep (“Let’s add feature X”). Fix: Feature freeze after Week 4. New ideas go to v1.1 backlog.

Sprint 3 (Week 6): Close launch blockers

Activities:

- Fix critical bugs (blocks core action)

- Performance baseline (Lighthouse mobile performance score >70)

- Security basics (HTTPS, input validation, rate limiting)

- Finalize analytics (test events fire correctly)

Output: Launch-ready MVP (passes launch checklist).
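
For the rate-limiting item in the security basics, a fixed-window counter is often enough at MVP scale. The sketch below is an assumption-heavy illustration: `createRateLimiter`, its parameters, and the in-memory store are hypothetical, and a deployment with more than one server instance would likely need a shared store instead.

```typescript
interface WindowState {
  windowStart: number; // timestamp (ms) when the current window opened
  count: number;       // requests seen in the current window
}

// Fixed-window rate limiter kept in memory.
// `key` is whatever identifies the caller (for example an IP or user id).
function createRateLimiter(maxRequests: number, windowMs: number) {
  // Object.create(null) avoids collisions with inherited keys such as "toString".
  const state: { [key: string]: WindowState | undefined } = Object.create(null);

  // Returns true if the request is allowed, false if the caller is over the limit.
  return function allow(key: string, nowMs: number): boolean {
    const current = state[key];
    if (current === undefined || nowMs - current.windowStart >= windowMs) {
      // First request from this key, or the previous window has expired.
      state[key] = { windowStart: nowMs, count: 1 };
      return true;
    }
    if (current.count < maxRequests) {
      current.count += 1;
      return true;
    }
    return false;
  };
}
```

Passing the timestamp in explicitly (instead of reading the clock inside) keeps the limiter easy to test.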

Common blocker: “Just one more feature.” Fix: If it wasn’t P0 in Week 2, it’s not critical. Launch without it.

Weeks 7-8: Launch and measure

Launch is the start of learning, not the end.

Week 7: Soft launch

Activities:

- Launch to 10-20 users (warm contacts, early waitlist)

- Monitor dashboard every 4 hours

- Fix critical bugs within 24h

- Interview 3-5 early users (“What almost stopped you?”)

Output: Activation rate baseline (% who complete core action).
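
The activation baseline is simple arithmetic once the events exist. The sketch below assumes the denominator is users who signed up (the roadmap only says "% who complete core action"); the `UserRecord` shape and `activationRate` name are hypothetical.

```typescript
interface UserRecord {
  id: string;
  signedUp: boolean;
  completedCoreAction: boolean;
}

// Activation rate = users who completed the core action / users who signed up.
// Returns a fraction between 0 and 1; returns 0 when nobody has signed up yet.
function activationRate(users: UserRecord[]): number {
  const signedUp = users.filter((u) => u.signedUp);
  if (signedUp.length === 0) return 0;
  const activated = signedUp.filter((u) => u.completedCoreAction);
  return activated.length / signedUp.length;
}
```

With a soft launch of 10-20 users, each user moves the rate by several percentage points, so it is best read as a baseline rather than a verdict.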

Common blocker: No metrics setup. Fix: Analytics must be live before first user (see launch checklist).

Week 8: Iterate + broaden

Activities:

- Ship 2-3 hotfixes (biggest friction points from Week 7)

- Expand to 50-100 users (broader outreach, LinkedIn, communities)

- Weekly cohort analysis (Day 1 vs Day 7 retention)

- Decide next phase (iterate, pivot, scale—see decision framework)

Output: Decision signal (activation >30%, retention >25% = iterate; <20% = pivot or stop).
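
The cohort analysis and decision signal can be sketched as two small functions. Several things here are assumptions rather than statements from the roadmap: the 20% floor is applied to activation, anything between the thresholds is labeled "inconclusive", days are plain integers, and all type and function names are hypothetical.

```typescript
interface CohortUser {
  signupDay: number;    // day index of signup (integer)
  activeDays: number[]; // day indexes on which the user was active
}

// Day-N retention = share of the cohort active exactly N days after signup.
function dayNRetention(cohort: CohortUser[], n: number): number {
  if (cohort.length === 0) return 0;
  const retained = cohort.filter((u) => u.activeDays.indexOf(u.signupDay + n) !== -1);
  return retained.length / cohort.length;
}

type Decision = "iterate" | "pivot_or_stop" | "inconclusive";

// Thresholds come from the roadmap (30% activation, 25% retention, 20% floor).
// Assumption: the 20% floor applies to activation; the middle band is "inconclusive".
function decide(activation: number, retention: number): Decision {
  if (activation > 0.3 && retention > 0.25) return "iterate";
  if (activation < 0.2) return "pivot_or_stop";
  return "inconclusive";
}
```

For example, `decide(activationRateValue, dayNRetention(cohort, 7))` would combine the Week 7 baseline with Day 7 retention, where `activationRateValue` is whatever activation figure the team measured.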

Common blocker: Perfectionism paralysis (“Let’s wait until Week 12”). Fix: 8 weeks is enough for first decision signal. Act on data.

Week-by-week breakdown by tech stack

Full-stack framework (Next.js + Vercel + Supabase)

Timeline: 6 weeks

- Week 1-2: Discovery + scope lock

- Week 3: Auth (NextAuth) + basic UI (Tailwind)

- Week 4: Core action (API routes + Supabase CRUD)

- Week 5: Error handling + onboarding polish

- Week 6: Launch prep (analytics, security, performance)

Why faster: Single codebase (frontend + backend), deploy in minutes (Vercel), built-in auth/DB (Supabase).

Backend-first (Node.js + PostgreSQL + React)

Timeline: 8 weeks

- Week 1-2: Discovery + scope lock

- Week 3: Backend API (Express routes + Postgres schema)

- Week 4: Frontend core pages (React + API integration)

- Week 5: Auth + state management (Redux/Context)

- Week 6: Error handling + retention hooks

- Week 7: Polish + performance

- Week 8: Launch + measure

Why slower: Separate frontend/backend deployment, more integration complexity.

Mobile-first (React Native + Firebase)

Timeline: 8 weeks

- Week 1-2: Discovery + scope lock

- Week 3: Expo setup + basic navigation

- Week 4: Core screens + Firebase integration (auth, Firestore)

- Week 5: Offline support + push notifications setup

- Week 6: Error handling + analytics (Firebase Analytics)

- Week 7: TestFlight beta (iOS) or internal test (Android)

- Week 8: Launch + measure

Why slower: Mobile-specific challenges (app store review, push notification setup, offline handling).

No-code hybrid (Webflow + Airtable + Make)

Timeline: 3 weeks

- Week 1: Discovery + scope lock (same as code-based)

- Week 2: Webflow landing page + Airtable base + Make workflows

- Week 3: Testing + launch

Why faster: Zero coding, visual builders, plug-and-play integrations.

Trade-off: Tends to hit scaling limits at around 1k users and gets expensive beyond that.

See tech stack guide for choosing the right approach.

Common blockers by week (and fixes)

Week 2 blocker: Scope creep during planning

Symptom: “We also need feature X, Y, Z.”

Fix: Must-Have filter. If it’s not required for first value, it’s P1 (v1.1).

Week 4 blocker: Integration delays

Symptom: Stripe API documentation unclear, Twilio not working, waiting on third-party support.

Fix: Start integrations in Week 3 (not Week 4). If blocked for more than 2 days, fall back to a manual workaround (e.g., manual invoicing instead of Stripe for the first 10 users).
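
One way to keep a manual workaround cheap to replace later is to hide billing behind a small interface. This is a sketch under assumptions: `BillingProvider`, `ManualInvoicing`, and `checkout` are hypothetical names, and no real Stripe API is shown or implied.

```typescript
interface Invoice {
  customerEmail: string;
  amountCents: number;
}

// Minimal contract the rest of the app depends on.
// A payment-provider-backed implementation could be added later behind the same interface.
interface BillingProvider {
  charge(invoice: Invoice): string;
}

// Manual fallback: queue the invoice so a founder can send it by hand.
class ManualInvoicing implements BillingProvider {
  pending: Invoice[] = [];

  charge(invoice: Invoice): string {
    this.pending.push(invoice);
    return "queued_for_manual_invoice";
  }
}

// Application code only knows about the interface, not the implementation.
function checkout(provider: BillingProvider, invoice: Invoice): string {
  return provider.charge(invoice);
}
```

Because `checkout` depends only on `BillingProvider`, swapping the manual queue for an automated integration after validation should not require changes to the calling code.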

Week 6 blocker: Polish trap

Symptom: “UI isn’t perfect, let’s delay launch 2 weeks.”

Fix: If 5 alpha users complete core action, it’s good enough. Launch to 20, iterate based on feedback (not guesses).

Week 7 blocker: No users to launch to

Symptom: “We launched but no one signed up.”

Fix: Build waitlist during Week 1-6 (landing page, LinkedIn outreach, community posts). Launch should have 20+ people ready to try.

Acceleration tactics (when to use)

Tactic 1: Parallel design + dev

How: Designer works on mockups in Weeks 1-2 while scope is being locked. Developer starts Week 3 with finished designs.

Time saved: 1 week (no waiting for design handoff).

When to use: Team has dedicated designer + developer.

Tactic 2: Pre-built components

How: Use UI libraries (shadcn/ui, Chakra UI), auth libraries (NextAuth, Firebase), payment SDKs (Stripe Elements).

Time saved: 2-3 weeks (vs building from scratch).

When to use: Standard features (auth, payments, UI components). Don’t reinvent.

Tactic 3: Ruthless de-scoping

How: If a feature is P0 but adds more than 5 days, ask: “Can we use a manual workaround for the first 10 users?”

Examples:

- Manual onboarding emails (vs automated drip campaigns)

- Manual payment invoices (vs Stripe integration)

- CSV export only (vs PDF, Excel, JSON)

Time saved: 1-2 weeks per feature deferred.

When to use: Always. Automate post-validation, not pre-validation.
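
The Must-Have filter and the 5-day de-scoping question can be combined into one triage rule. The sketch below is illustrative only: the `Candidate` fields, the `planFeature` function, and the three outcome labels are hypothetical, and the estimates are whatever rough numbers the team already has.

```typescript
type Plan = "build_now" | "manual_workaround" | "defer_to_v1_1";

interface Candidate {
  name: string;
  requiredForFirstValue: boolean;    // Must-Have filter: needed to reach first value?
  estimateDays: number;              // rough build estimate in days
  manualWorkaroundPossible: boolean; // can a human cover this for the first 10 users?
}

// Triage rule:
// 1. Not required for first value -> P1, defer to v1.1.
// 2. P0 but costs more than 5 days and can be done by hand -> manual workaround.
// 3. Otherwise -> build it now.
function planFeature(f: Candidate): Plan {
  if (!f.requiredForFirstValue) return "defer_to_v1_1";
  if (f.estimateDays > 5 && f.manualWorkaroundPossible) return "manual_workaround";
  return "build_now";
}
```

Running every backlog item through the same rule makes de-scoping a routine step rather than a negotiation.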

Tactic 4: Feature freeze discipline

How: Feature freeze Week 5. After that: bug fixes only, no new features.

Time saved: Prevents 2-4 week scope creep.

When to use: Every MVP, no exceptions.

When 4 weeks becomes 12 weeks (reality check)

Most timeline slips come from:

Cause 1: Unclear scope

Symptom: Week 4, team still debating which features are MVP.

Fix: Lock scope Week 2. No changes after that (exceptions: critical bugs only).

Cause 2: No measurement plan

Symptom: Week 7, scrambling to add analytics before launch.

Fix: Define events in Week 2, start tracking alongside the core flow (Weeks 3-4), and verify that events fire correctly during launch prep in Week 6.

Cause 3: Treating MVP like v1.0

Symptom: “Let’s add admin dashboard, team roles, CSV export, email templates…”

Fix: MVP = validation tool, not production-ready product. Manual workarounds acceptable.

Cause 4: Delayed decisions

Symptom: “We’ll decide on payment provider later” (then Week 6 arrives, no decision made).

Fix: Decision deadlines in roadmap (“Payment provider decided by end of Week 3”).
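
Decision deadlines are easier to enforce when they live next to the roadmap as data. A minimal sketch, assuming a hypothetical `PendingDecision` record and treating a decision as overdue once the current week is past its due week:

```typescript
interface PendingDecision {
  topic: string;
  dueWeek: number;  // decision must be made by the end of this week
  decided: boolean;
}

// Returns the topics that are still open after their deadline week has ended.
function overdueDecisions(decisions: PendingDecision[], currentWeek: number): string[] {
  return decisions
    .filter((d) => !d.decided && d.dueWeek < currentWeek)
    .map((d) => d.topic);
}
```

Checking this list at the start of each week turns "we'll decide later" into a visible blocker.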

Real MVP examples with timelines

Example 1: EVOC (8 weeks)

EVOC, a crowdsourcing platform, shows what an 8-week MVP looks like. It went from kickoff to test-ready in 8 weeks:

Weeks 1-2: Architecture + mobile reporting MVP (anonymous access, geolocation, media upload)

Weeks 3-4: Operator dashboard (review workflow, validation states, basic maps)

Weeks 5-6: Aggregation layer (heatmaps, filters, export to CSV/Excel)

Weeks 7-8: Pilot testing + hardening (error handling, performance, operator feedback)

Outcome:

- <2 min to submit a report

- 85% completion rate

- 120+ validated reports in first 2 weeks of pilot

- 40% faster operator review vs. manual triage

The case study includes trade-offs, architecture decisions, and the full hardening roadmap.

Example 2: LyLife (4 weeks)

For a healthcare example on a faster timeline, see the LyLife case study: it went from idea to functional MVP in 30 days using an accelerated approach (pre-built components, ruthless de-scoping, parallel workflows).

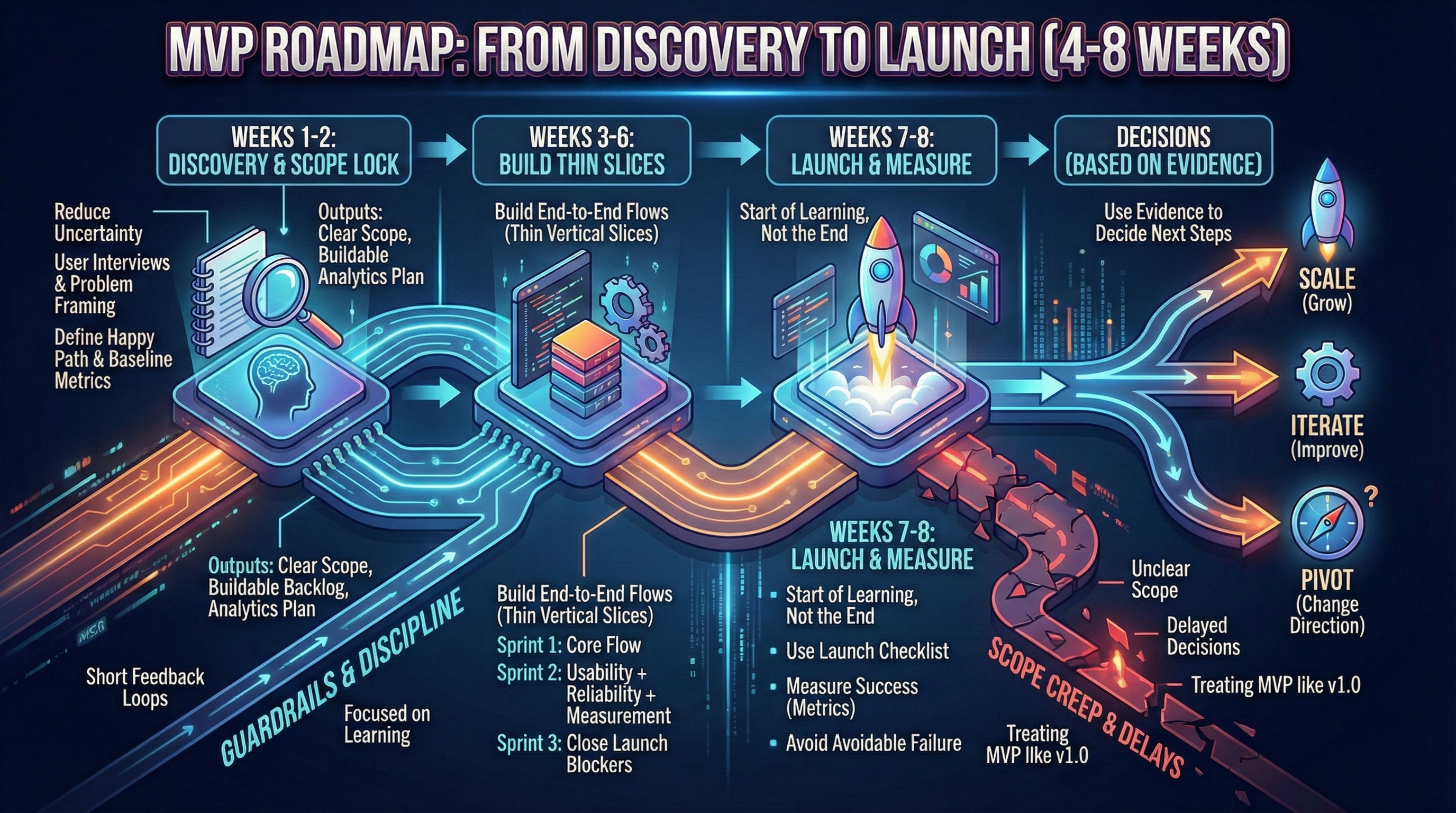

Roadmap visualization

This roadmap is built around one idea: ship something measurable, then use evidence to decide what happens next (see post-MVP decisions).

Conclusion

A disciplined 4-8 week MVP is realistic when:

- Scope is locked Week 2 (no changes after)

- Backlog stays buildable (P0 features only)

- Measurement is non-negotiable (analytics live before launch)

- Manual workarounds are embraced (automate post-validation)

Remember:

- Full-stack framework (Next.js): 6 weeks

- Backend-first or mobile: 8 weeks

- No-code hybrid: 3 weeks

- Common blockers: scope creep (Week 2), integration delays (Week 4), polish trap (Week 6)

- Acceleration: parallel design+dev, pre-built components, ruthless de-scoping, feature freeze

Next step after launch: How to measure MVP success—what to track and when to decide.