Why Crowdsourcing Platforms Fail (And How to Fix Them)

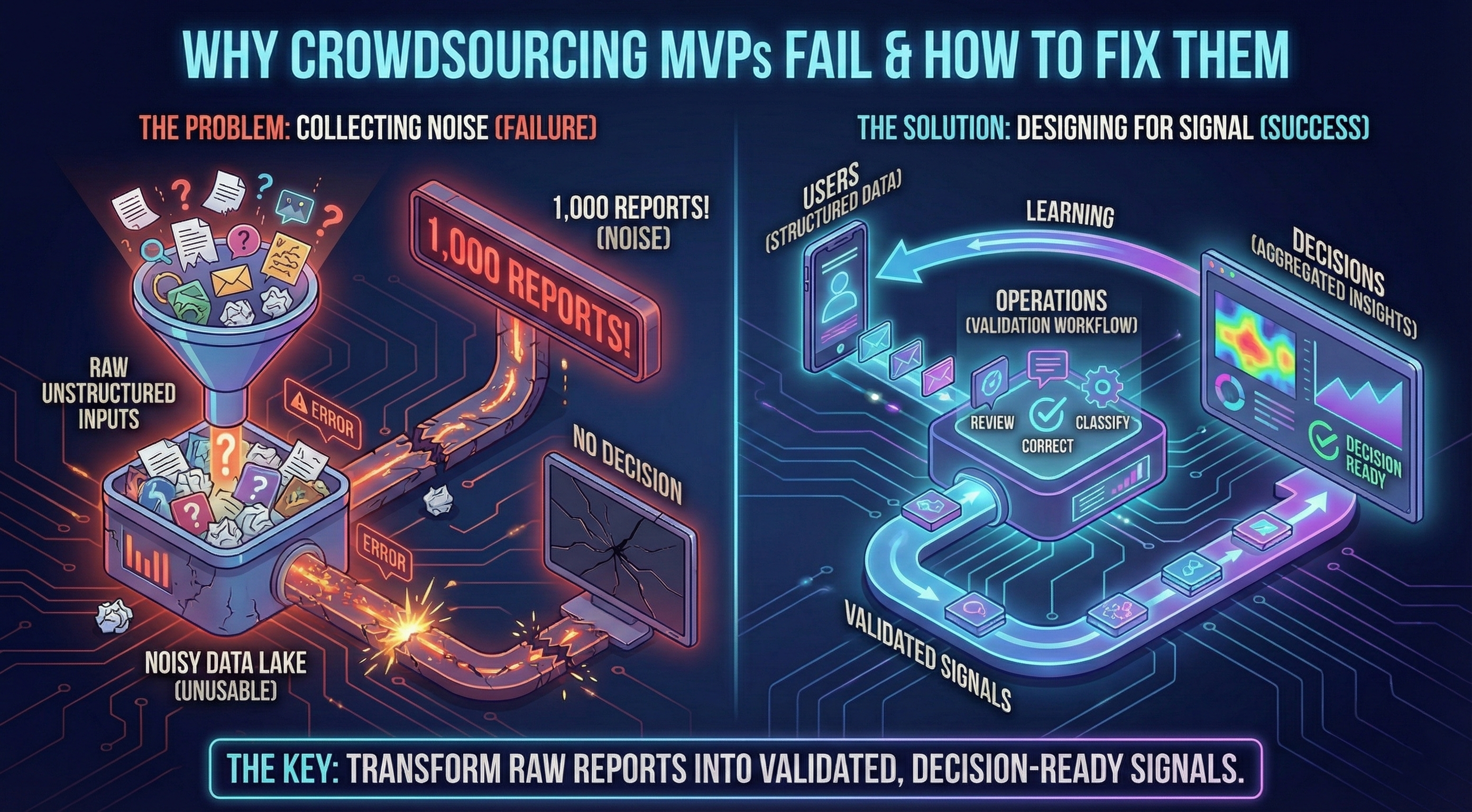

Most crowdsourcing platforms drown in their own data. This article explains why raw reports rarely become useful signals, and how to design the validation and aggregation layers that turn participation into decisions your team can actually act on.

Crowdsourcing sounds simple: ask people to report what they see, collect the data, and act on it.

In practice, most crowdsourcing MVPs fail—not because people don’t care, but because reports don’t automatically become decisions. Raw inputs create volume, not clarity. And without the right product structure, teams end up with noise instead of signal.

This article explores what usually goes wrong with crowdsourcing MVPs, and how to design them to produce reliable, decision-ready signals instead of just more data.

Why Most Crowdsourcing MVPs Fail

When crowdsourcing initiatives stall, the causes are often the same:

High friction at the wrong moment. Reporting takes too long, or requires registration while users are in the field. A sighting leaves only a short window to act, roughly 90 seconds; once it closes, the sighting goes unreported.

No validation layer. Raw submissions are treated as truth. One bad report erodes trust. Ten bad reports kill the platform.

Operational blind spots. Teams focus on collecting data, but not on how it will be reviewed, corrected, and aggregated. Operators become bottlenecks. The database grows, but insight doesn’t.

“Fast MVP” shortcuts. Speed is prioritized without thinking about how the system will evolve once real usage starts. The first version ships quickly. The second version requires a rewrite.

> The biggest bottleneck in MVPs isn’t how fast you can write code. It’s how fast you can learn from real users. Most “fast MVPs” optimize for the wrong metric. (Read: MVP Roadmap—4 to 8 Weeks)

The result is predictable: a spike in early interest, followed by declining engagement and unusable data.

Reports Are Not Signals

A key misunderstanding in many MVPs is assuming that more reports automatically mean better insight.

They don’t.

A signal is different from a raw report. It is:

- Validated: confirmed by a human or a reliable process

- Repeatable: consistent enough to support patterns

- Interpretable at scale: aggregatable into territory-level or system-level insight

Without validation and structure, crowdsourced inputs remain anecdotal. They might look impressive in a spreadsheet, but they don’t support decisions.

Designing for signal means thinking beyond submission and asking:

> What needs to happen between a report and an action?

This is the gap where most crowdsourcing MVPs break. They focus on collecting inputs but never close the loop to turn those inputs into trustworthy, actionable data.

> Validation is what separates a demo from a real product. (Read: First MVP—Demo vs. Real Product)

Designing for Low Friction Without Killing Data Quality

Friction matters most at the moment of reporting. People are often outdoors, in a hurry, or unsure whether what they’re seeing is relevant.

A good crowdsourcing MVP reduces friction without sacrificing future reliability:

- Let users start reporting immediately (defer account creation)

- Keep the flow short and guided (3-5 inputs max)

- Capture media and location automatically (don’t ask users to type coordinates)

- Provide smart defaults (date = today, location = current GPS)

At the same time, structure matters. Lightweight guidance during input is what enables validation and aggregation later.
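As a minimal sketch of how smart defaults and lightweight structure can coexist, the draft below fills in the date automatically, leaves account creation for later, and limits the category to a short guided list. All names here (`ReportDraft`, `reporter_token`, the category values) are assumptions made for illustration, not the schema of any particular platform.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional
import uuid

# Hypothetical guided choices; a real product would define its own list.
ALLOWED_CATEGORIES = {"single_insect", "nest", "unsure"}


@dataclass
class ReportDraft:
    """A field report that can be submitted before the user has an account."""
    photo_path: str                     # the one thing the user must supply
    latitude: Optional[float] = None    # filled from device GPS when available
    longitude: Optional[float] = None
    observed_on: date = field(default_factory=date.today)  # smart default: today
    category: str = "unsure"            # guided choice instead of free text
    # Anonymous token so earlier reports can be linked to an account later.
    reporter_token: str = field(default_factory=lambda: uuid.uuid4().hex)
    user_id: Optional[str] = None       # account creation is deferred


def missing_fields(draft: ReportDraft) -> list[str]:
    """List what is still needed before the report can be validated and aggregated."""
    problems = []
    if not draft.photo_path:
        problems.append("photo_path")
    if draft.latitude is None or draft.longitude is None:
        problems.append("location")
    if draft.category not in ALLOWED_CATEGORIES:
        problems.append("category")
    return problems


# Example: the user only took a photo; the app supplied everything else.
draft = ReportDraft(photo_path="sighting.jpg", latitude=40.86, longitude=14.27)
print(missing_fields(draft))
```

The user supplies one input, yet every stored report still carries a date, a location, and a category that operators can filter on later.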

The trade-off isn’t “friction vs. quality.” It’s “unstructured speed vs. structured speed.” You can have both—if the product is designed intentionally.

> This is where accelerators such as AI tooling can help, if used with guardrails. Speed without structure creates fragility. (AI as Accelerator, Not Autopilot)

The Missing Half: Operational Workflows

Most failed MVPs focus almost exclusively on the user-facing side.

But in crowdsourcing, the operator experience is just as important. Without clear workflows, every new report increases workload instead of insight.

Signal-first products make operational steps explicit:

- Review incoming reports (is this credible?)

- Correct or enrich metadata (fix species misidentification, adjust location)

- Classify and label consistently (habitat type, threat level, priority)

- Confirm or reject based on clear criteria

This is where noise is filtered out and trust is built—both internally and externally.

If your MVP doesn’t have a validation workflow, it’s not producing signals yet. It’s producing a database.
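One way to make the review, correct, classify, and confirm-or-reject steps explicit is a small state machine with an audit trail. The sketch below is only an illustration under assumed names (`Status`, `Report`, `move_to`, `correct`); the states and rules of a real workflow would come from the operators who use it.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    SUBMITTED = "submitted"
    IN_REVIEW = "in_review"
    CONFIRMED = "confirmed"
    REJECTED = "rejected"


# Allowed moves between states; anything else is treated as a workflow error.
TRANSITIONS = {
    Status.SUBMITTED: {Status.IN_REVIEW},
    Status.IN_REVIEW: {Status.CONFIRMED, Status.REJECTED},
    Status.CONFIRMED: set(),
    Status.REJECTED: set(),
}


@dataclass
class Report:
    report_id: str
    species: str
    status: Status = Status.SUBMITTED
    labels: dict = field(default_factory=dict)   # e.g. habitat, priority
    history: list = field(default_factory=list)  # (operator, action, detail)

    def move_to(self, new_status: Status, operator: str, reason: str = "") -> None:
        """Change status only along an allowed transition and record who did it."""
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(
                f"cannot move from {self.status.value} to {new_status.value}"
            )
        self.history.append((operator, new_status.value, reason))
        self.status = new_status

    def correct(self, operator: str, **changes: str) -> None:
        """Fix or enrich metadata; only allowed while the report is in review."""
        if self.status is not Status.IN_REVIEW:
            raise ValueError("metadata can only be corrected during review")
        for name, value in changes.items():
            if name == "species":
                self.species = value
            else:
                self.labels[name] = value
        self.history.append((operator, "corrected", ", ".join(sorted(changes))))


# Example: an operator fixes a misidentified species, then confirms the report.
report = Report(report_id="r-1", species="Vespa crabro")
report.move_to(Status.IN_REVIEW, operator="op-7")
report.correct("op-7", species="Vespa orientalis", habitat="urban")
report.move_to(Status.CONFIRMED, operator="op-7", reason="markings visible in photo")
```

Because confirmed and rejected are terminal states here, a report cannot silently flip after a decision, and the history shows who changed what.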

> Scoping an MVP means defining these workflows early, not treating them as “nice-to-have” features. (How to Scope an MVP)

Aggregation Turns Inputs Into Decisions

Individual reports rarely matter on their own.

What matters is what emerges in aggregate:

- Patterns over time (is spread accelerating?)

- Geographic hotspots (where should interventions focus?)

- Correlations with other contextual data (weather, land use, proximity to sensitive areas)

Maps, filters, heatmaps, and exports are not “nice-to-have features.” They are the mechanisms that turn validated data into something decision-makers can actually use.

If an MVP can’t answer questions at this level, it’s not producing signal yet.
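The first two kinds of aggregate, patterns over time and geographic hotspots, can be sketched with nothing more than counting. The example assumes each report is a dict with `lat`, `lon`, `observed_on`, and `confirmed` keys, and it uses an arbitrary grid size of 0.05 degrees (about 5.5 km north to south); the field names, the cell size, and the sample coordinates are all illustrative.

```python
from collections import Counter
from datetime import date
import math


def grid_cell(lat: float, lon: float, cell_deg: float = 0.05) -> tuple[int, int]:
    """Snap a coordinate to a coarse grid cell so nearby reports group together."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def hotspots(reports: list[dict], min_count: int = 2) -> list[tuple]:
    """Cells with at least min_count confirmed reports, most active first."""
    counts = Counter(
        grid_cell(r["lat"], r["lon"]) for r in reports if r["confirmed"]
    )
    return [(cell, n) for cell, n in counts.most_common() if n >= min_count]


def weekly_counts(reports: list[dict]) -> list[tuple]:
    """Confirmed reports per ISO (year, week), in chronological order."""
    counts = Counter(
        tuple(r["observed_on"].isocalendar()[:2]) for r in reports if r["confirmed"]
    )
    return sorted(counts.items())


# Made-up sample data: three confirmed reports close together, one unconfirmed.
reports = [
    {"lat": 40.86, "lon": 14.27, "observed_on": date(2024, 6, 3), "confirmed": True},
    {"lat": 40.87, "lon": 14.28, "observed_on": date(2024, 6, 5), "confirmed": True},
    {"lat": 40.86, "lon": 14.28, "observed_on": date(2024, 6, 11), "confirmed": True},
    {"lat": 40.68, "lon": 14.76, "observed_on": date(2024, 6, 11), "confirmed": False},
]
print(hotspots(reports))
print(weekly_counts(reports))
```

Only confirmed reports are counted, so the quality of every map or trend line depends directly on the validation step that precedes it.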

A Real-World Example: EVOC

We applied these principles while building EVOC, a crowdsourcing platform designed to monitor sightings of Vespa orientalis (the Oriental hornet) across Campania (Italy).

Instead of starting from features, the product was designed around a complete loop:

- Fast, low-friction field reporting via mobile app

- Structured validation and review for operators

- Aggregation through maps, filters, and exports for institutions

The goal was not to maximize the number of reports, but to produce trustworthy, decision-ready insights from the very first releases.

Key early results:

- <2 minutes to submit a report (field-tested)

- 85% completion rate (high for crowdsourcing MVPs)

- 120+ reports validated in the first 2 weeks of pilot usage

- 40% faster operator review vs. manual email/spreadsheet triage

Common Pitfalls (And How to Avoid Them)

If you’re considering a crowdsourcing MVP, watch out for these traps:

Shipping only the front-end and postponing validation “for later.” Validation isn’t a feature—it’s the product.

Collecting unstructured inputs that can’t be reliably aggregated. Structure enables scale.

Optimizing for volume metrics instead of signal quality. “We got 1,000 reports!” means nothing if 600 are noise.

Using accelerators such as AI tooling without guardrails. Speed is good. Fragile foundations are expensive.

> When every fix breaks something else, it’s because the foundation wasn’t designed to evolve. (Why Every Fix Breaks Something Else)

Skipping measurement. If you can’t quantify signal quality and delivery speed, you’re guessing.

> Measuring MVP success requires both delivery metrics and product-side signals. (How to Measure MVP Success)
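To make "signal quality" concrete, here is a small sketch that computes three of the measures this article mentions: completion rate, the share of reviewed reports that turn out to be signal, and median review time. The names (`PilotStats`, `signal_ratio`) are assumptions. The 1,000 reports with 600 rejected echo the example above, while the number of started flows and the review times are invented for illustration.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class PilotStats:
    started: int           # report flows opened
    submitted: int         # report flows completed
    confirmed: int         # accepted by an operator
    rejected: int          # discarded as noise
    review_minutes: tuple  # time from submission to decision, per report


def completion_rate(s: PilotStats) -> float:
    """Share of started reports that were actually submitted."""
    return s.submitted / s.started if s.started else 0.0


def signal_ratio(s: PilotStats) -> float:
    """Share of reviewed reports that were confirmed rather than rejected."""
    reviewed = s.confirmed + s.rejected
    return s.confirmed / reviewed if reviewed else 0.0


def median_review_minutes(s: PilotStats) -> float:
    """Typical operator review time; the median resists a few slow outliers."""
    return median(s.review_minutes) if s.review_minutes else 0.0


stats = PilotStats(started=1250, submitted=1000, confirmed=400, rejected=600,
                   review_minutes=(4, 6, 9))
print(f"completion {completion_rate(stats):.0%}, "
      f"signal {signal_ratio(stats):.0%}, "
      f"median review {median_review_minutes(stats)} min")
```

With these inputs the signal ratio is 40%, which turns the "1,000 reports" headline into a number a team can track from release to release.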

A good MVP doesn’t just move fast; it stays reversible and easy to learn from.

What to Focus on in Your MVP

Instead of asking “What features should we build?”, try asking:

- What signals do we need to observe early?

- Where does noise enter the system?

- Which workflows turn inputs into insight?

- What shortcuts would create long-term costs?

These questions shape better MVPs than any checklist of features.

Final Thoughts

Crowdsourcing can be powerful—but only when products are designed to turn participation into clarity.

Signal-first MVPs close the loop between users, operations, and decisions. They prioritize learning over output, and structure over volume.

If you’re building a product where data quality, trust, and real-world impact matter, start there.

See Signal-First Design in Practice

Want to see exactly how these principles work in a real 8-week MVP?

👉 Read the full EVOC case study

How we turned raw wasp sightings into validated territorial intelligence, with reporting in under 2 minutes, 120+ validated reports, and 40% faster operator review.

Topics covered:

- Complete loop architecture (mobile + dashboard + validation)

- Trade-offs: friction vs. identity, speed vs. maintainability

- Metrics that matter: completion rate, review time, signal quality

- 8-week timeline breakdown

- Hardening roadmap: from test-ready to production-confident